Azure Blob Storage Python SDK: Cloud Object Storage - Openclaw Skills

Author: Internet

2026-04-13

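What Is the Azure Blob Storage SDK for Python?

The Azure Blob Storage SDK for Python is a full-featured client library for object storage in the cloud. It lets developers store and retrieve large volumes of unstructured data, such as text or binary data. By integrating this skill through Openclaw Skills, developers can automate container management and blob lifecycle operations, and secure data access with modern authentication patterns such as Azure Active Directory (now Microsoft Entra ID).

The library is a core building block for cloud-native applications that need reliable storage. Whether you are building a data lake, a content management system, or a backup tool, the SDK hides the complexity of cloud communication behind high-level Python abstractions.

Download: https://github.com/openclaw/skills/tree/main/skills/thegovind/azure-storage-blob-py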

Installation and Download

1. ClawHub CLI

The fastest way to install the skill directly from the source.

npx clawhub@latest install azure-storage-blob-py

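2. Manual Installation

Copy the skill folder to one of the following locations:

Global: ~/.openclaw/skills/

Workspace: /skills/

Priority: workspace > local > built-in

3. Prompt-Based Installation

Copy this prompt into OpenClaw to install the skill automatically.

Please use Clawhub to install azure-storage-blob-py. If Clawhub is not installed yet, install it first (npm i -g clawhub).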

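Use Cases for the Azure Blob Storage SDK for Python

- Serve images or documents directly to web browsers for global access.
- Store files for distributed access across multiple compute nodes.
- Stream video and audio content to client applications.
- Write log files and archive telemetry data for long-term retention.
- Store data for backup and restore, disaster recovery, and big-data analytics.
- Initialize a BlobServiceClient with the storage account URL and a credential such as DefaultAzureCredential.
- Navigate the hierarchy by obtaining a ContainerClient to manage a specific container.
- Use a BlobClient to access an individual object for file-specific operations.
- Perform core operations such as upload_blob, download_blob, or list_blobs to work with cloud data streams.
- Optimize performance by configuring concurrency and block size for large file transfers.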

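Configuration Guide for the Azure Blob Storage SDK for Python

To start using storage with Openclaw Skills, install the required packages from the command line:

pip install azure-storage-blob azure-identity

Next, set the environment variables that tell the client which storage account to use:

AZURE_STORAGE_ACCOUNT_NAME=<your-storage-account-name>

AZURE_STORAGE_ACCOUNT_URL=https://<account>.blob.core.windows.net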
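One way to consume these two variables is sketched below. The helper name `resolve_account_url` and the rule that the full URL takes precedence over the account name are assumptions made for illustration; the SDK itself does not read these variables automatically.

```python
import os


def resolve_account_url(env=None):
    """Return the Blob endpoint URL described by the environment.

    Prefers AZURE_STORAGE_ACCOUNT_URL; otherwise builds the URL from
    AZURE_STORAGE_ACCOUNT_NAME. Raises ValueError if neither is set.
    """
    env = os.environ if env is None else env
    url = env.get("AZURE_STORAGE_ACCOUNT_URL", "").strip()
    if url:
        # Drop a trailing slash so later path joins stay predictable.
        return url.rstrip("/")
    name = env.get("AZURE_STORAGE_ACCOUNT_NAME", "").strip()
    if name:
        return f"https://{name}.blob.core.windows.net"
    raise ValueError("Set AZURE_STORAGE_ACCOUNT_URL or AZURE_STORAGE_ACCOUNT_NAME")
```

The returned string can then be passed as the account URL when constructing a BlobServiceClient.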

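Data Architecture and Taxonomy of the Azure Blob Storage SDK for Python

The SDK organizes data in a strict three-level hierarchy, which shapes how Openclaw Skills workflows are built:

| Level | Object | Description |
|---|---|---|
| Account | BlobServiceClient | Top-level client for account-wide settings and container listing. |
| Container | ContainerClient | Represents a namespace (similar to a folder) for a group of blobs. |
| Blob | BlobClient | Represents a single file or data object inside a container. |

Blobs also support custom metadata (key-value pairs) and system properties such as Content-Type and Content-Encoding, which help browsers handle the content correctly.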
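The three levels map directly onto a blob's URL: account endpoint, then container, then blob name. The sketch below composes such a URL with the standard library only; `blob_url` is a hypothetical helper and `myaccount` is a made-up account name.

```python
from urllib.parse import quote


def blob_url(account_url, container, blob_name):
    """Compose a blob URL from the account, container, and blob levels."""
    # Keep "/" unescaped so virtual directories such as "logs/" stay readable.
    return f"{account_url.rstrip('/')}/{quote(container)}/{quote(blob_name, safe='/')}"


print(blob_url("https://myaccount.blob.core.windows.net", "mycontainer", "logs/app 1.txt"))
# https://myaccount.blob.core.windows.net/mycontainer/logs/app%201.txt
```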

name: azure-storage-blob-py
description: |
  Azure Blob Storage SDK for Python. Use for uploading, downloading, listing blobs, managing containers, and blob lifecycle.
  Triggers: "blob storage", "BlobServiceClient", "ContainerClient", "BlobClient", "upload blob", "download blob".
package: azure-storage-blob

Azure Blob Storage SDK for Python

Client library for Azure Blob Storage — object storage for unstructured data.

Installation

pip install azure-storage-blob azure-identity

Environment Variables

AZURE_STORAGE_ACCOUNT_NAME=<your-storage-account-name>
# Or use full URL
AZURE_STORAGE_ACCOUNT_URL=https://<account>.blob.core.windows.net

Authentication

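from azure.identity import DefaultAzureCredential
from azure.storage.blob import BlobServiceClient

# DefaultAzureCredential tries several sources in turn (environment, managed identity, developer tools)
credential = DefaultAzureCredential()
# Replace <account> with your storage account name
account_url = "https://<account>.blob.core.windows.net"
blob_service_client = BlobServiceClient(account_url, credential=credential)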

Client Hierarchy

| Client | Purpose | Get From |
|---|---|---|
| BlobServiceClient | Account-level operations | Direct instantiation |
| ContainerClient | Container operations | blob_service_client.get_container_client() |
| BlobClient | Single blob operations | container_client.get_blob_client() |

Core Workflow

Create Container

container_client = blob_service_client.get_container_client("mycontainer")
# Raises ResourceExistsError if the container already exists
container_client.create_container()

Upload Blob

# From file path
blob_client = blob_service_client.get_blob_client(
    container="mycontainer",
    blob="sample.txt"
)

with open("./local-file.txt", "rb") as data:
    blob_client.upload_blob(data, overwrite=True)

# From bytes/string
blob_client.upload_blob(b"Hello, World!", overwrite=True)

# From stream
import io

stream = io.BytesIO(b"Stream content")
blob_client.upload_blob(stream, overwrite=True)

Download Blob

import io

blob_client = blob_service_client.get_blob_client(
    container="mycontainer",
    blob="sample.txt"
)

# To file
with open("./downloaded.txt", "wb") as file:
    download_stream = blob_client.download_blob()
    file.write(download_stream.readall())

# To memory
download_stream = blob_client.download_blob()
content = download_stream.readall()  # bytes

# Read into existing buffer (returns the number of bytes written)
stream = io.BytesIO()
num_bytes = blob_client.download_blob().readinto(stream)

List Blobs

container_client = blob_service_client.get_container_client("mycontainer")

# List all blobs
for blob in container_client.list_blobs():
    print(f"{blob.name} - {blob.size} bytes")

# List with prefix (folder-like)
for blob in container_client.list_blobs(name_starts_with="logs/"):
    print(blob.name)

# Walk blob hierarchy (virtual directories)
for item in container_client.walk_blobs(delimiter="/"):
    if item.get("prefix"):
        print(f"Directory: {item['prefix']}")
    else:
        print(f"Blob: {item.name}")
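Blob names are flat strings; the "directories" in a delimiter-based listing are derived from shared name prefixes. The pure-Python sketch below imitates that grouping for one level so the idea can be tried without a storage account. It is an illustration only, not the SDK's implementation, and `walk_level` is a hypothetical name.

```python
def walk_level(blob_names, prefix="", delimiter="/"):
    """Split flat blob names into the virtual directories and blobs directly under prefix."""
    directories, blobs = [], []
    for name in sorted(blob_names):
        if not name.startswith(prefix):
            continue
        rest = name[len(prefix):]
        head, sep, _ = rest.partition(delimiter)
        if sep:
            # A delimiter remains, so the name lives inside a virtual directory.
            directory = prefix + head + delimiter
            if directory not in directories:
                directories.append(directory)
        else:
            blobs.append(name)
    return directories, blobs


names = ["readme.txt", "logs/app.log", "logs/2024/jan.log", "images/cat.png"]
print(walk_level(names))                  # (['images/', 'logs/'], ['readme.txt'])
print(walk_level(names, prefix="logs/"))  # (['logs/2024/'], ['logs/app.log'])
```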

Delete Blob

blob_client.delete_blob()

# Delete with snapshots
blob_client.delete_blob(delete_snapshots="include")

Performance Tuning

from azure.storage.blob import BlobClient

# Configure chunk sizes for large uploads/downloads
blob_client = BlobClient(
    account_url=account_url,
    container_name="mycontainer",
    blob_name="large-file.zip",
    credential=credential,
    max_block_size=4 * 1024 * 1024,  # 4 MiB blocks
    max_single_put_size=64 * 1024 * 1024  # 64 MiB single upload limit
)

# Parallel upload
with open("./large-file.zip", "rb") as data:
    blob_client.upload_blob(data, max_concurrency=4)

# Parallel download
download_stream = blob_client.download_blob(max_concurrency=4)
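To get a feel for how the two size settings interact, the sketch below estimates how an upload of a given size would be split. It assumes the commonly described behavior: payloads up to max_single_put_size go out in one request, and larger ones are staged in blocks of max_block_size. `plan_upload` is a hypothetical helper, and the count ignores the final commit request.

```python
import math

MiB = 1024 * 1024


def plan_upload(size_bytes, max_block_size=4 * MiB, max_single_put_size=64 * MiB):
    """Estimate how many data requests a block blob upload of size_bytes needs."""
    if size_bytes <= max_single_put_size:
        return {"mode": "single_put", "requests": 1}
    # Larger payloads are cut into fixed-size blocks; the last one may be shorter.
    return {"mode": "staged_blocks", "requests": math.ceil(size_bytes / max_block_size)}


print(plan_upload(10 * MiB))    # {'mode': 'single_put', 'requests': 1}
print(plan_upload(1024 * MiB))  # {'mode': 'staged_blocks', 'requests': 256}
```

Under these assumptions, a larger block size means fewer requests per file, while max_concurrency controls how many of those blocks are in flight at once.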

SAS Tokens

from datetime import datetime, timedelta, timezone
from azure.storage.blob import generate_blob_sas, BlobSasPermissions

sas_token = generate_blob_sas(
    account_name="<account>",
    container_name="mycontainer",
    blob_name="sample.txt",
    account_key="<account-key>",  # Or use user delegation key
    permission=BlobSasPermissions(read=True),
    expiry=datetime.now(timezone.utc) + timedelta(hours=1)
)

# Use SAS token
blob_url = f"https://<account>.blob.core.windows.net/mycontainer/sample.txt?{sas_token}"
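A SAS URL carries its expiry in the `se` query parameter, so a client can check it before making a request. The sketch below parses that value with the standard library. The helper names are hypothetical, the sample URL and signature are made up, and the timestamp format (`YYYY-MM-DDTHH:MM:SSZ`) is an assumption about how the token was generated.

```python
from datetime import datetime, timezone
from urllib.parse import parse_qs, urlsplit


def sas_expiry(url):
    """Return the expiry from the `se` parameter as an aware UTC datetime, or None."""
    values = parse_qs(urlsplit(url).query).get("se")
    if not values:
        return None
    return datetime.strptime(values[0], "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)


def is_expired(url, now=None):
    """Report whether the SAS URL has passed its expiry time."""
    expiry = sas_expiry(url)
    if expiry is None:
        raise ValueError("URL has no 'se' parameter")
    now = now or datetime.now(timezone.utc)
    return now >= expiry


sample = ("https://myaccount.blob.core.windows.net/mycontainer/sample.txt"
          "?sp=r&se=2030-01-01T00%3A00%3A00Z&sig=placeholder")
print(sas_expiry(sample))  # 2030-01-01 00:00:00+00:00
```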

Blob Properties and Metadata

# Get properties
properties = blob_client.get_blob_properties()
print(f"Size: {properties.size}")
print(f"Content-Type: {properties.content_settings.content_type}")
print(f"Last modified: {properties.last_modified}")

# Set metadata
blob_client.set_blob_metadata(metadata={"category": "logs", "year": "2024"})

# Set content type
from azure.storage.blob import ContentSettings

blob_client.set_http_headers(
    content_settings=ContentSettings(content_type="application/json")
)

Async Client

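from azure.identity.aio import DefaultAzureCredential
from azure.storage.blob.aio import BlobServiceClient

# Replace <account> with your storage account name
account_url = "https://<account>.blob.core.windows.net"

async def upload_async():
    # Async credentials and clients hold network sessions, so close both with context managers
    async with DefaultAzureCredential() as credential:
        async with BlobServiceClient(account_url, credential=credential) as client:
            blob_client = client.get_blob_client("mycontainer", "sample.txt")
            with open("./file.txt", "rb") as data:
                await blob_client.upload_blob(data, overwrite=True)

# Download async
async def download_async():
    # Each coroutine creates its own credential instead of relying on one from another scope
    async with DefaultAzureCredential() as credential:
        async with BlobServiceClient(account_url, credential=credential) as client:
            blob_client = client.get_blob_client("mycontainer", "sample.txt")
            stream = await blob_client.download_blob()
            data = await stream.readall()
            return data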
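Coroutines like the ones above need an event loop to run, and when many transfers are started at once it can help to cap how many are in flight. The sketch below shows one possible pattern using only asyncio; `run_bounded` and `fake_upload` are hypothetical names, and `fake_upload` is a stand-in that performs no I/O.

```python
import asyncio


async def run_bounded(coroutine_factories, limit=4):
    """Await the coroutines produced by the factories, at most `limit` at a time."""
    semaphore = asyncio.Semaphore(limit)

    async def guarded(factory):
        async with semaphore:
            return await factory()

    # gather returns results in the same order as the inputs.
    return await asyncio.gather(*(guarded(f) for f in coroutine_factories))


async def fake_upload(name):
    # Stand-in for an awaited upload_blob call; yields control once, then returns.
    await asyncio.sleep(0)
    return f"uploaded {name}"


names = ["a.txt", "b.txt", "c.txt"]
results = asyncio.run(run_bounded([lambda n=n: fake_upload(n) for n in names], limit=2))
print(results)  # ['uploaded a.txt', 'uploaded b.txt', 'uploaded c.txt']
```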

Best Practices

- Use DefaultAzureCredential instead of connection strings

- Use context managers for async clients

- Set overwrite=True explicitly when re-uploading
- Use max_concurrency for large file transfers
- Prefer readinto() over readall() for memory efficiency
- Use walk_blobs() for hierarchical listing
- Set appropriate content types for web-served blobs
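For the last point, the content type can often be derived from the blob name. The sketch below uses the standard library's mimetypes module; `guess_content_type` is a hypothetical helper, and falling back to application/octet-stream for unknown extensions is an assumption.

```python
import mimetypes


def guess_content_type(blob_name, default="application/octet-stream"):
    """Guess a Content-Type from the blob name, falling back to a generic binary type."""
    content_type, _encoding = mimetypes.guess_type(blob_name)
    return content_type or default


print(guess_content_type("site/index.html"))  # text/html
print(guess_content_type("images/photo.png"))  # image/png
```

The result can be passed as the content_type of a ContentSettings object when uploading or when calling set_http_headers.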

相关推荐

专题

+ 收藏

+ 收藏

+ 收藏

+ 收藏

+ 收藏

+ 收藏

最新数据

相关文章

阿里云大模型服务平台百炼新人免费额度如何申请?申请与使用免费额度教程及常见问题解答

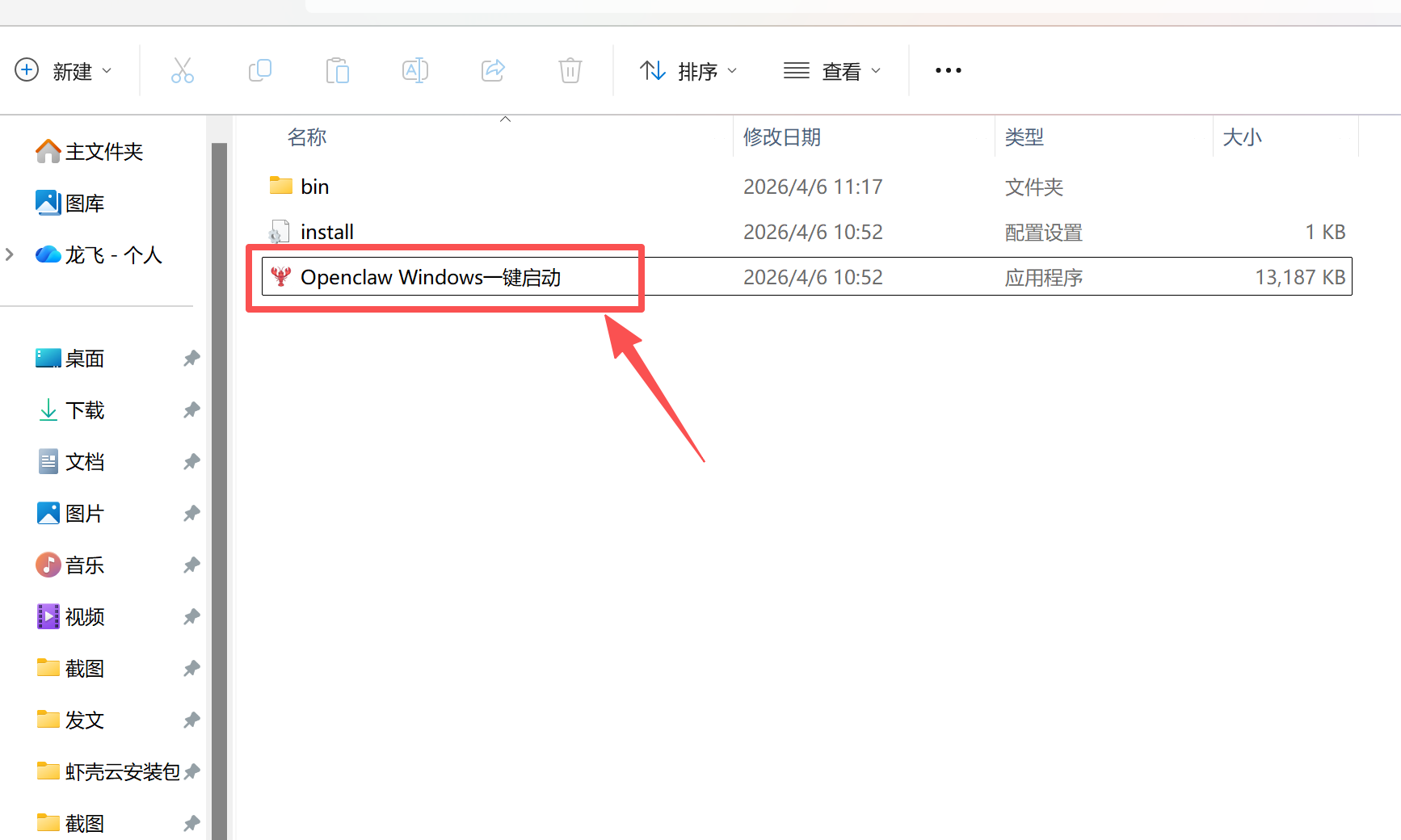

办公 AI 工具 OpenClaw 部署 Windows 系统一站式教程

Qwen3.6 正式发布!阿里云百炼同步开启“AI大模型节省计划”超值优惠

【新手零难度操作 】OpenClaw 2.6.4 安装误区规避与快速使用指南(包含最新版安装包)

OpenClaw 2.6.4 可视化部署 打造个人 AI 数字员工(包含最新版安装包)

【小白友好!】OpenClaw 2.6.4 本地 AI 智能体快速搭建教程(内有安装包)

零基础部署 OpenClaw v2.6.2,Windows 系统完整教程

【适合新手的】零基础部署 OpenClaw 自动化工具教程

开发者们的第一台自主进化的“爱马仕”来了

极简部署 OpenClaw 2.6.2 本地 AI 智能体快速启用(含最新版安装包)

AI精选