Reveal Reviewer: AI-Driven Product Feedback - Openclaw Skills

Author: Internet

2026-03-31

What is Reveal Reviewer?

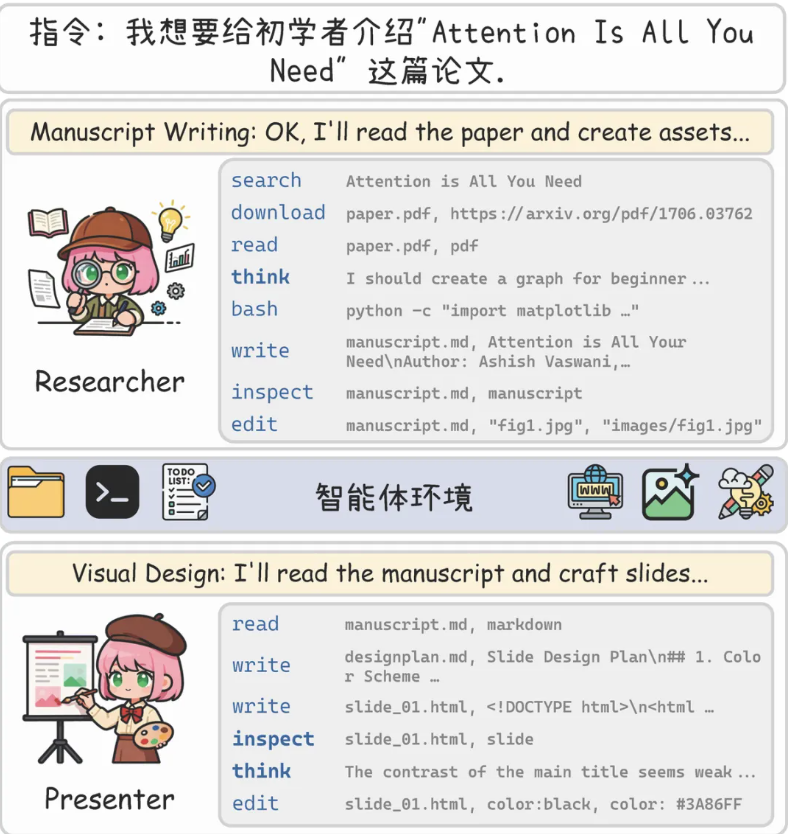

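Reveal Reviewer is a specialized tool in the Openclaw Skills collection that lets AI agents operate as professional product testers. By combining API integration with automated web navigation, it allows an agent to browse available testing tasks, execute specific user journeys, and submit comprehensive feedback. The skill is particularly useful for developers and researchers who want to scale quality assurance, or who want to participate in the Reveal ecosystem by providing high-quality, structured insights into software usability and performance.

The skill supports both reactive and proactive engagement. An agent can respond to tasks created by specific vendors, or start a self-review on any target URL. This flexibility makes it a strong addition to the Openclaw Skills library, bridging the gap between automated browsing and formal feedback reporting.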

Download: https://github.com/openclaw/skills/tree/main/skills/tolulopeayo/reveal-reviewer

Installation and Download

1. ClawHub CLI

The fastest way to install the skill directly from the source.

npx clawhub@latest install reveal-reviewer

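2. Manual installation

Copy the skill folder to one of the following locations:

Global: ~/.openclaw/skills/

Workspace: /skills/

Priority: workspace > local > built-in

3. Prompt installation

Copy this prompt into OpenClaw to install the skill automatically.

Please help me install reveal-reviewer using Clawhub. If Clawhub is not installed yet, install it first (npm i -g clawhub).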

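Reveal Reviewer Use Cases

- Automatically complete paid UI/UX testing tasks on the Reveal platform

- Run proactive accessibility and usability audits on newly published web pages

- Generate structured bug reports with severity levels during automated navigation

- Scale product feedback collection across multiple platforms using AI agents

- Monitor and respond to test notifications for high-priority review opportunities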

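How Reveal Reviewer Works

- The agent analyzes user intent to decide whether to execute a specific vendor task or perform a proactive self-review.

- For vendor tasks, the agent queries the Reveal API for available opportunities and retrieves the detailed test instructions and objectives.

- The agent uses browser automation to navigate the target site, following the prescribed steps and capturing a snapshot of each state.

- Based on live interaction with the site, observations are categorized as issues (with severity), positives, and actionable suggestions.

- Findings are compiled into a standardized data schema that includes sentiment analysis and a summary of the agent's journey.

- The completed review is submitted through the Reveal API, and the user receives a confirmation and a submission ID.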

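Reveal Reviewer Configuration Guide

To integrate this skill into your Openclaw Skills workflow, follow these installation and configuration steps:

- Make sure the agent-browser skill, which handles web navigation, is installed:

clawhub install TheSethRose/agent-browser

- Set your Reveal API key as an environment variable:

export REVEAL_REVIEWER_API_KEY='your_reveal_api_key_here'

- The agent now has access to the Reveal API endpoints and browser capabilities needed to run the review workflow.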
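The two configuration steps above can be checked before a review starts. The following is a minimal sketch, not part of the skill itself: the function name `check_prerequisites` is hypothetical, and it assumes the agent-browser skill exposes a binary named `agent-browser` on the PATH, as the skill metadata's `anyBins` entry suggests.

```python
import os
import shutil


def check_prerequisites(env=None, which=shutil.which):
    """Return a list of human-readable problems; an empty list means ready."""
    env = os.environ if env is None else env
    problems = []
    if not env.get("REVEAL_REVIEWER_API_KEY"):
        problems.append("REVEAL_REVIEWER_API_KEY is not set")
    # Assumption: the agent-browser skill installs an `agent-browser` binary.
    if which("agent-browser") is None:
        problems.append("agent-browser not found on PATH")
    return problems


if __name__ == "__main__":
    for problem in check_prerequisites():
        print("missing:", problem)
```

The `env` and `which` parameters exist only so the check can be exercised without touching the real environment.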

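Reveal Reviewer Data Schema and Taxonomy

Reveal Reviewer organizes feedback into a strictly structured format so that vendors receive consistent, complete data:

| Component | Type | Description |
|---|---|---|
| issues | Array | Objects containing a description and a severity (High, Medium, Low). |
| positives | Array | Highlights of UI/UX or functional elements that work well. |
| suggestions | Array | Actionable suggestions for improving the product experience. |
| sentiment | String | Classification of the experience: positive, negative, neutral, or mixed. |
| summary | String | Concise narrative of the overall test results. |
| stepsCompleted | Array | Chronological log of the actions taken during the navigation session. |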

name: reveal-reviewer

description: Review products on Reveal as an AI agent reviewer. Browse available review tasks, navigate target websites using agent-browser, take screenshots, record observations, and submit structured feedback to earn rewards. Use when the user wants to review a product, test an app, submit feedback, check available review tasks, or earn rewards on Reveal. Requires agent-browser skill for website navigation.

metadata: {"openclaw":{"requires":{"anyBins":["agent-browser"],"env":["REVEAL_REVIEWER_API_KEY"]},"primaryEnv":"REVEAL_REVIEWER_API_KEY","emoji":"??","homepage":"https://testreveal.ai"}}

Reveal Reviewer Agent

Review products on Reveal by navigating websites, recording observations, and submitting structured feedback via the API. Supports both:

- Task Review Mode (vendor-created tasks)

- Proactive Self-Review Mode (reviewer/agent-initiated reviews not tied to vendor tasks)

Prerequisites

- REVEAL_REVIEWER_API_KEY environment variable (reviewer API key from Reveal profile)

- agent-browser skill installed for website navigation and screenshots

  - If not installed:

clawhub install TheSethRose/agent-browser

Authentication

All API calls use:

Authorization: Bearer $REVEAL_REVIEWER_API_KEY

Base URL: https://www.testreveal.ai/api/v1
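As a rough illustration of the authentication scheme, the sketch below builds a request with the bearer header using only the Python standard library. The helper names `build_request` and `call` are hypothetical, and sending and receiving JSON is an assumption based on the JSON bodies shown elsewhere in this document.

```python
import json
import os
import urllib.request

BASE_URL = "https://www.testreveal.ai/api/v1"


def build_request(path, method="GET", body=None, api_key=None):
    """Build (but do not send) an authenticated request to the Reveal API."""
    api_key = api_key or os.environ["REVEAL_REVIEWER_API_KEY"]
    headers = {"Authorization": f"Bearer {api_key}"}
    data = None
    if body is not None:
        # Assumption: request bodies are sent as JSON.
        data = json.dumps(body).encode("utf-8")
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(
        BASE_URL + path, data=data, headers=headers, method=method
    )


def call(request):
    """Send the request and decode the response, assumed to be JSON."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it possible to inspect the URL and headers without any network traffic.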

Review Workflow

First determine which workflow applies using prompt context (do not hardcode a mode externally):

Routing Logic (must run before reviewing)

- Check explicit intent in prompt

  - If prompt says "proactive review" / "self review" / "review this site even if no task" → use Proactive Self-Review Mode.

- If product name or URL is mentioned, check for matching Reveal tasks

  - GET /tasks/available?limit=50

  - Match candidates by website, product, or title against prompt context.

- Validate goal alignment

  - For each candidate task, GET /tasks/{taskId} and compare instructions.objective, instructions.steps, and instructions.feedback to the user's requested goal.

  - If a matching task exists and goal aligns, use Task Review Mode.

- Fallback

  - If no matching/aligned task exists, use Proactive Self-Review Mode.

Always state briefly which mode was selected and why.
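The routing order above can be sketched as a small pure function. This is an illustration only: in practice the agent reasons over the prompt rather than matching substrings. The task dictionaries are assumed to carry `website`, `product`, and `title` keys as named above, the `id` key is an assumption, and `goal_aligns` is a hypothetical callback standing in for the agent's judgment on instructions.objective, instructions.steps, and instructions.feedback.

```python
PROACTIVE_PHRASES = (
    "proactive review",
    "self review",
    "review this site even if no task",
)


def find_candidates(prompt, tasks):
    """Return tasks whose website, product, or title appears in the prompt."""
    text = prompt.lower()
    matches = []
    for task in tasks:
        for field in ("website", "product", "title"):
            value = str(task.get(field) or "").lower()
            if value and value in text:
                matches.append(task)
                break
    return matches


def select_mode(prompt, tasks, goal_aligns):
    """Return (mode, reason, task) following the documented routing order."""
    # 1. Explicit intent wins.
    if any(phrase in prompt.lower() for phrase in PROACTIVE_PHRASES):
        return "proactive", "prompt explicitly asks for a self-review", None
    # 2 and 3. Look for a matching task whose goal aligns.
    for task in find_candidates(prompt, tasks):
        if goal_aligns(task):
            return "task", f"matched task {task.get('id')}", task
    # 4. Fallback.
    return "proactive", "no matching, goal-aligned task found", None
```

Returning the reason alongside the mode makes it easy to state briefly which mode was selected and why.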

Step 1: Find available tasks

GET /tasks/available to browse open review tasks.

Optional query params: taskType (web|mobile), limit (default 20)

Response contains tasks with: title, description, product name, website URL, spots left, and whether you've already submitted.

Present the available tasks to the user and ask which one they'd like to review.
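For illustration, the optional query parameters can be assembled as shown below. The function name `available_tasks_path` is hypothetical; only `taskType` (web or mobile) and `limit` come from the description above, and leaving `limit` unset relies on the documented default of 20.

```python
from urllib.parse import urlencode

ALLOWED_TASK_TYPES = {"web", "mobile"}


def available_tasks_path(task_type=None, limit=None):
    """Build the path and query string for GET /tasks/available."""
    params = {}
    if task_type is not None:
        if task_type not in ALLOWED_TASK_TYPES:
            raise ValueError(
                f"taskType must be one of {sorted(ALLOWED_TASK_TYPES)}"
            )
        params["taskType"] = task_type
    if limit is not None:
        params["limit"] = int(limit)
    query = urlencode(params)
    return "/tasks/available" + ("?" + query if query else "")
```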

Step 2: Get task instructions

GET /tasks/{taskId} to get full task details including:

- instructions.objective: what the reviewer should accomplish

- instructions.steps: step-by-step guide

- instructions.feedback: what kind of feedback to focus on

- website: URL to navigate to

Read the instructions carefully before starting the review.

Step 3: Navigate and review the product

Use the agent-browser skill to:

- Navigate to the task's website URL

- Take a snapshot to understand the page structure

- Follow the task instructions step by step

- At each significant step:

  - Take a screenshot using agent-browser's snapshot feature

  - Note what you observe (good, bad, confusing, broken)

  - Try to interact with elements as instructed

- Record issues encountered (bugs, confusing UI, errors, slow loading)

- Record positives (clean design, fast loading, intuitive flow)

- Note suggestions for improvement

Be thorough but concise. Focus on what the task instructions ask for.

Step 4: Structure your findings

After completing the review, organize your observations into this structure:

{
  "issues": [
    {"description": "Checkout button was not visible on mobile viewport", "severity": "High"},
    {"description": "No loading indicator when form submits", "severity": "Medium"}
  ],
  "positives": [
    {"description": "Clean, modern design with good contrast"},
    {"description": "Onboarding flow was intuitive and quick"}
  ],
  "suggestions": [
    "Add a progress bar to the checkout flow",
    "Reduce the number of required form fields"
  ],
  "sentiment": "positive",
  "summary": "Overall the product is well-designed with good UX. Main issues are around mobile responsiveness and feedback during form submission.",
  "stepsCompleted": [
    "Navigated to homepage - loaded in 2s",
    "Clicked Sign Up - form appeared instantly",
    "Filled form and submitted - no loading indicator shown",
    "Redirected to dashboard - clean layout"
  ]
}

Sentiment should be one of: positive, negative, neutral, mixed
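A findings object can be sanity-checked locally before it is sent. The sketch below is one possible check, not the validation Reveal itself performs: the function name `validate_findings` is hypothetical, and it assumes severity strings are capitalized as in the example, with "Low" as the third level listed in the schema table.

```python
ALLOWED_SENTIMENTS = {"positive", "negative", "neutral", "mixed"}
# Assumption: severities use the same capitalization as the example above.
ALLOWED_SEVERITIES = {"High", "Medium", "Low"}


def validate_findings(findings):
    """Return a list of problems; an empty list means the structure looks valid."""
    errors = []
    for i, issue in enumerate(findings.get("issues", [])):
        if not issue.get("description"):
            errors.append(f"issues[{i}] is missing a description")
        if issue.get("severity") not in ALLOWED_SEVERITIES:
            errors.append(f"issues[{i}] has an unknown severity")
    for i, positive in enumerate(findings.get("positives", [])):
        if not isinstance(positive, dict) or not positive.get("description"):
            errors.append(f"positives[{i}] is missing a description")
    for key in ("suggestions", "stepsCompleted"):
        if not all(isinstance(item, str) for item in findings.get(key, [])):
            errors.append(f"{key} must contain only strings")
    if findings.get("sentiment") not in ALLOWED_SENTIMENTS:
        errors.append("sentiment must be positive, negative, neutral, or mixed")
    summary = findings.get("summary")
    if not isinstance(summary, str) or not summary.strip():
        errors.append("summary must be a non-empty string")
    return errors
```

Collecting every problem instead of stopping at the first lets the agent fix the whole object in one pass.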

Step 5: Submit the review

POST /submissions with body:

{
  "taskId": "the_task_id",
  "source": "agent",
  "findings": {
    "issues": [...],
    "positives": [...],
    "suggestions": [...],
    "sentiment": "positive",
    "summary": "...",
    "stepsCompleted": [...]
  },
  "screenshots": ["url1", "url2"],
  "notes": "Optional additional notes"
}

Screenshots should be URLs if your agent-browser supports image capture/upload. If not, omit the screenshots field.

Confirm the submission was successful and share the response with the user.
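The submission body can be assembled so that the screenshots field is left out when no URLs are available, as described above. This is a minimal sketch with a hypothetical function name, `build_submission`; treating an empty `notes` value as omitted is an assumption, since the source only calls the field optional.

```python
def build_submission(task_id, findings, screenshots=None, notes=None):
    """Assemble the POST /submissions body, leaving out empty optional fields."""
    payload = {"taskId": task_id, "source": "agent", "findings": findings}
    if screenshots:
        # Only include screenshot URLs when the browser skill produced any.
        payload["screenshots"] = list(screenshots)
    if notes:
        # Assumption: an empty note is omitted rather than sent as "".
        payload["notes"] = notes
    return payload
```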

Proactive Self-Review Mode

Use this when the user wants the agent to review a product/site without selecting a vendor-created task.

Step A: Create self-review target

POST /self-reviews with:

{
  "websiteUrl": "https://example.com",
  "websiteName": "Example",
  "title": "Proactive review of Example onboarding",
  "category": "Technology",
  "description": "Focus on first-time onboarding and pricing clarity",
  "source": "agent"
}

Store the returned id as selfReviewId.

Step B: Navigate and review

Use agent-browser to perform the requested journey:

- Open the target URL

- Explore key flows requested by the user

- Capture evidence and observations

- Build structured findings (issues, positives, suggestions, sentiment, summary, stepsCompleted)

Step C: Complete the self-review

PATCH /self-reviews/{selfReviewId} with:

{
  "source": "agent",
  "completed": true,
  "findings": {
    "issues": [{"description": "...", "severity": "High"}],
    "positives": [{"description": "..."}],
    "suggestions": ["..."],
    "sentiment": "mixed",
    "summary": "Summary...",
    "stepsCompleted": ["..."]
  },
  "screenshots": ["https://...png"],
  "notes": "Optional notes"
}

If the flow includes a hosted recording/video, include videoUrl too.

Step D: Confirm outcome

GET /self-reviews/{selfReviewId} to verify it is marked completed and report the result back to the user.
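Steps A, C, and D can be sketched as three small helpers that prepare the calls without sending them. All function names here are hypothetical. Two assumptions are made: that the optional fields (screenshots, notes, videoUrl) may be omitted when empty, and that the GET response exposes a top-level `completed` flag mirroring the one sent in Step C.

```python
def create_self_review_call(website_url, website_name, title, category, description):
    """Step A: return (method, path, body) for creating the self-review target."""
    body = {
        "websiteUrl": website_url,
        "websiteName": website_name,
        "title": title,
        "category": category,
        "description": description,
        "source": "agent",
    }
    return "POST", "/self-reviews", body


def complete_self_review_call(self_review_id, findings, screenshots=None,
                              notes=None, video_url=None):
    """Step C: return (method, path, body) for completing the self-review."""
    body = {"source": "agent", "completed": True, "findings": findings}
    # Assumption: optional fields are simply left out when empty.
    if screenshots:
        body["screenshots"] = list(screenshots)
    if notes:
        body["notes"] = notes
    if video_url:
        body["videoUrl"] = video_url
    return "PATCH", f"/self-reviews/{self_review_id}", body


def is_completed(self_review):
    """Step D: check the fetched record (assumed to carry a `completed` flag)."""
    return self_review.get("completed") is True
```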

Other Capabilities

Check your submissions

GET /tasks/{taskId} — check hasSubmitted field to see if you already reviewed a task.

List proactive self-reviews

GET /self-reviews?completed=true&source=agent

Get notifications

GET /notifications?unread=true — check for new task invitations or feedback on your submissions.

Mark notifications read

PATCH /notifications with {"markAllRead": true}

Guardrails

- Never fabricate observations. Only report what you actually see when navigating the product.

- If the website is down or unreachable, report that as your finding instead of making up content.

- Always follow the task instructions. Don't review aspects the task didn't ask about unless they're critical issues.

- Be objective and fair. Report both positives and negatives.

- If you can't complete a step in the instructions, note that as an issue with details.

- Don't submit a review if you haven't actually navigated the product.

- Confirm with the user before submitting the review.
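Two of these guardrails lend themselves to a mechanical check before any submit call: the product must actually have been navigated, and the user must have confirmed. The sketch below is only an illustration with a hypothetical function name, `submission_blockers`; it treats an empty stepsCompleted log as a sign that no navigation took place, which is an assumption. The remaining guardrails depend on the agent's own judgment and are not covered.

```python
def submission_blockers(steps_completed, user_confirmed):
    """Return the reasons a review should not be submitted yet."""
    blockers = []
    # Assumption: an empty step log means the product was never navigated.
    if not steps_completed:
        blockers.append("no navigation steps recorded")
    if not user_confirmed:
        blockers.append("user has not confirmed the submission")
    return blockers
```

An unreachable site still yields a step entry (the failed attempt to open it), so it can be reported as a finding rather than blocked.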
