Web Scraper: Automated Data Extraction with Openclaw Skills

Author: Internet

2026-04-12

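What Is Web Scraper?

Web Scraper is a data extraction tool for Openclaw Skills users who need to turn unstructured web content into clean, usable datasets. It drives a browser, so it can handle JavaScript-rendered content, infinite scroll, and multi-page navigation.

Whether you are monitoring competitors, building a research database, or tracking e-commerce trends, this skill automates data collection so that it can scale. It exposes a scriptable interface for web interaction, bridging the gap between raw HTML and structured data.

Download: https://github.com/openclaw/skills/tree/main/skills/yinanping-cpu/yinan-web-scraper

Installation and Download

1. ClawHub CLI

The fastest way to install the skill directly from the source.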

npx clawhub@latest install yinan-web-scraper

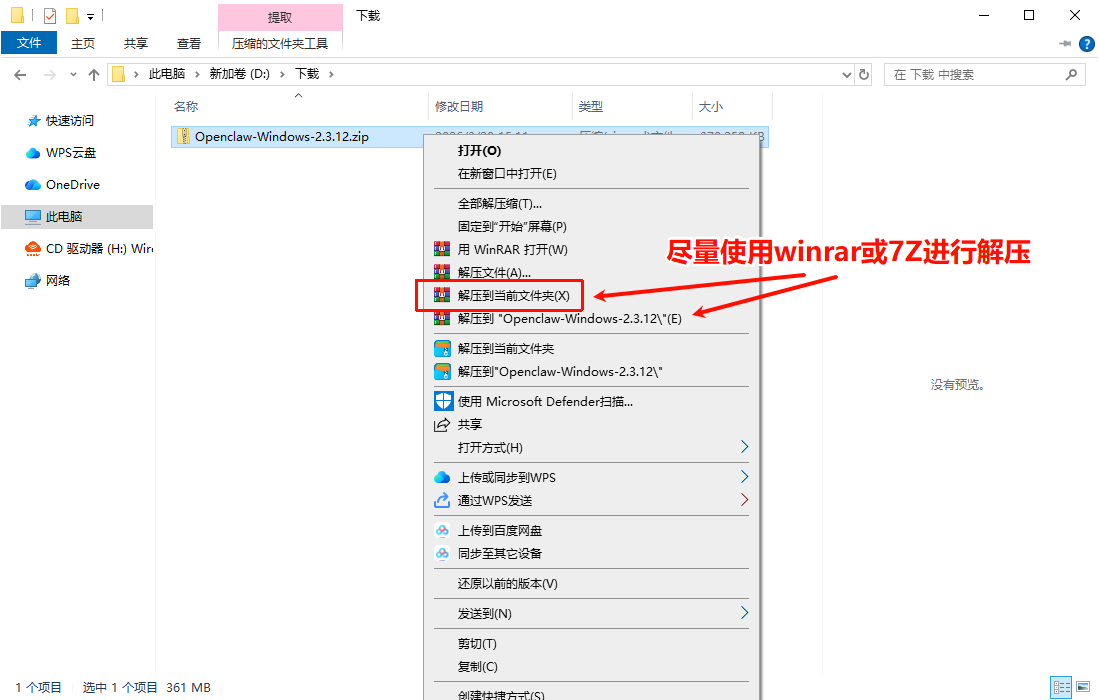

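2. Manual Installation

Copy the skill folder to one of the following locations:

Global: ~/.openclaw/skills/

Workspace: /skills/

Priority: workspace > local > built-in

3. Prompt-Based Installation

Copy this prompt into OpenClaw to install the skill automatically.

Please install yinan-web-scraper using Clawhub. If Clawhub is not installed yet, install it first (npm i -g clawhub).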

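Web Scraper Use Cases

- Monitor e-commerce product listings, prices, reviews, and stock levels.

- Aggregate real estate property data, price trends, and agent contact information.

- Collect job postings, salary requirements, and hiring trends from job boards.

- Scrape news articles, headlines, and publication dates for media and sentiment analysis.

- Build business directories and lead lists from web-based business listings.

How Web Scraper Works

- Identify the target URL and the specific data points you want to extract, expressed as standard CSS selectors.

- Choose the script that matches the task: the single-page, paginated, or infinite-scroll handler.

- The skill uses browser automation to navigate the site, execute JavaScript, and wait for dynamic content to load.

- Fields are parsed and mapped according to your definitions, which keeps the data consistent across pages.

- The structured data is exported in the format you choose (CSV, JSON, or Excel) for analysis.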

Web Scraper Configuration Guide

To get started with this Openclaw skill, make sure your environment is ready, then run the scripts from the command line:

# Example: scrape product data from a single page
python scripts/scrape_page.py \
  --url "https://example.com/products" \
  --fields "title=h2.title,price=.price,link=a.href" \
  --output products.csv

For paginated sites, use the following pattern:

python scripts/scrape_paginated.py \
  --url "https://example.com/products?page={page}" \
  --pages 10 \
  --fields "title,price,description" \
  --output all_products.csv

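Web Scraper Data Schema and Taxonomy

Web Scraper organizes data by user-defined field names and CSS selectors. It supports several export formats to fit your data pipeline:

| Format | Description |
|---|---|
| CSV | Flat file structure, well suited to spreadsheet software and simple data imports. |
| JSON | Hierarchical structure that includes fields such as scraped_at and nested object data. |
| XLSX | Multi-sheet Excel file with auto-fitted column widths and formatting. |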

name: web-scraper

description: Extract structured data from websites using browser automation. Use when scraping product listings, articles, contact info, prices, or any web content. Supports single pages, pagination, infinite scroll, and dynamic content. Outputs to CSV, JSON, or Excel.

Web Scraper

Overview

Professional web scraping skill using agent-browser. Extract structured data from any website with support for JavaScript-rendered content, pagination, and complex selectors.

Use Cases

- E-commerce: Product listings, prices, reviews, inventory

- Real Estate: Property listings, prices, agent contacts

- Job Boards: Job postings, salaries, requirements

- News/Media: Articles, headlines, publication dates

- Directories: Business listings, contact information

- Competitor Monitoring: Prices, products, content changes

Quick Start

Scrape Single Page

python scripts/scrape_page.py \
  --url "https://example.com/products" \
  --fields "title=h2.title,price=.price,link=a.href" \
  --output products.csv

Scrape with Pagination

python scripts/scrape_paginated.py \
  --url "https://example.com/products?page={page}" \
  --pages 10 \
  --fields "title,price,description" \
  --output all_products.csv

Scripts

scrape_page.py

Scrape a single page or static list.

Arguments:

- --url - Target URL

- --fields - Field definitions (name=selector format, comma-separated)

- --output - Output file (CSV, JSON, or XLSX)

- --format - Output format (csv, json, xlsx)

- --wait - Wait time for dynamic content (seconds)

Field Definition Format:

fieldname=css_selector

Examples:

title=h1.product-title

price=.price-tag

description=.product-description

image=img.product-image.src

link=a.product-link.href
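
The examples above suggest that a trailing .src or .href names an attribute to read rather than a CSS class, although the source does not state this rule. The sketch below shows one way such a --fields string could be split under that assumption; parse_fields, FieldSpec, and KNOWN_ATTRS are hypothetical names for illustration and are not part of the skill.

```python
from typing import NamedTuple, Optional

# Assumption: a trailing ".href" or ".src" names an attribute to read,
# as the examples above suggest. The skill's real parsing rules may differ.
KNOWN_ATTRS = {"href", "src"}


class FieldSpec(NamedTuple):
    name: str
    selector: str
    attribute: Optional[str]  # None means "take the element's text"


def parse_fields(spec: str) -> list[FieldSpec]:
    """Parse 'name=selector,name=selector' into FieldSpec entries."""
    fields = []
    for part in spec.split(","):
        part = part.strip()
        if not part:
            continue
        # A bare name (as in --fields "title,price") yields an empty selector.
        name, _, selector = part.partition("=")
        name, selector = name.strip(), selector.strip()
        attribute = None
        head, dot, tail = selector.rpartition(".")
        # Only treat the suffix as an attribute when something precedes the dot,
        # so that ".price" stays a plain class selector.
        if dot and head and tail in KNOWN_ATTRS:
            selector, attribute = head, tail
        fields.append(FieldSpec(name, selector, attribute))
    return fields
```

Because the string is split on commas, this sketch cannot represent selectors that themselves contain a comma (selector lists such as "h1, h2").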

scrape_paginated.py

Scrape multiple pages with pagination.

Arguments:

- --url - URL pattern (use {page} for page number)

- --pages - Number of pages to scrape

- --fields - Field definitions

- --output - Output file

- --delay - Delay between pages (seconds)

- --next-selector - CSS selector for "next page" button (alternative to URL pattern)
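
As a rough illustration of the {page} URL pattern, the sketch below expands a pattern into one URL per page. It assumes page numbering starts at 1, which the source does not confirm; page_urls and its start parameter are hypothetical.

```python
def page_urls(pattern: str, pages: int, start: int = 1) -> list[str]:
    """Expand a URL pattern containing {page} into a list of page URLs.

    Assumption: numbering starts at `start` (1 by default); the skill's
    own scrape_paginated.py may count differently.
    """
    if "{page}" not in pattern:
        raise ValueError("pattern must contain the {page} placeholder")
    if pages < 1:
        raise ValueError("pages must be at least 1")
    # str.replace is used instead of str.format so that any other braces
    # in the URL are left untouched.
    return [pattern.replace("{page}", str(n)) for n in range(start, start + pages)]
```

For example, page_urls("https://example.com/products?page={page}", 3) yields the URLs for pages 1, 2, and 3.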

scrape_infinite_scroll.py

Scrape pages with infinite scroll loading.

Arguments:

- --url - Target URL

- --scrolls - Number of scroll actions

- --fields - Field definitions

- --output - Output file

- --scroll-delay - Delay between scrolls (ms)
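
The scroll-and-collect loop implied by --scrolls and --scroll-delay can be sketched without a browser by injecting the page operations as callables. This is an assumption about the general technique, not the skill's implementation: collect_with_scrolls, read_items, and scroll_once are hypothetical, and the early stop when no new items appear is an added design choice.

```python
import time
from typing import Callable, Hashable, Iterable


def collect_with_scrolls(
    read_items: Callable[[], Iterable[Hashable]],
    scroll_once: Callable[[], None],
    scrolls: int,
    scroll_delay_ms: int = 1500,
    sleep: Callable[[float], None] = time.sleep,
) -> list:
    """Scroll up to `scrolls` times, collecting unique items in first-seen order."""
    seen: dict = {}
    for item in read_items():
        seen.setdefault(item, None)
    for _ in range(scrolls):
        scroll_once()
        sleep(scroll_delay_ms / 1000)  # --scroll-delay is given in milliseconds
        before = len(seen)
        for item in read_items():
            seen.setdefault(item, None)
        if len(seen) == before:
            break  # nothing new loaded, so further scrolling is unlikely to help
    return list(seen)
```

In a real run, read_items would query the rendered page and scroll_once would issue a scroll through the browser automation layer.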

scrape_dynamic.py

Scrape JavaScript-heavy sites with custom interactions.

Arguments:

- --url - Target URL

- --actions - JSON file with interaction sequence

- --fields - Field definitions

- --output - Output file

Configuration

Actions JSON Format (for dynamic scraping)

{
  "actions": [
    {"type": "click", "selector": "#load-more"},
    {"type": "wait", "ms": 2000},
    {"type": "scroll", "direction": "down", "pixels": 500},
    {"type": "fill", "selector": "#search", "value": "keyword"},
    {"type": "press", "key": "Enter"}
  ]
}
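
Before passing an actions file to scrape_dynamic.py, it can help to check its shape. The following sketch validates the structure shown above; the required keys per action type are inferred from that single example and may not match the skill's actual schema, and load_actions and REQUIRED_KEYS are hypothetical names.

```python
import json

# Required keys per action type, inferred only from the example above.
# The skill may accept other types or optional keys.
REQUIRED_KEYS = {
    "click": {"selector"},
    "wait": {"ms"},
    "scroll": {"direction", "pixels"},
    "fill": {"selector", "value"},
    "press": {"key"},
}


def load_actions(text: str) -> list[dict]:
    """Parse an actions JSON document and check each action's keys."""
    data = json.loads(text)
    if not isinstance(data, dict) or not isinstance(data.get("actions"), list):
        raise ValueError('expected an object with a top-level "actions" list')
    for index, action in enumerate(data["actions"]):
        if not isinstance(action, dict):
            raise ValueError(f"action {index}: expected an object")
        kind = action.get("type")
        if kind not in REQUIRED_KEYS:
            raise ValueError(f"action {index}: unknown type {kind!r}")
        missing = REQUIRED_KEYS[kind] - set(action)
        if missing:
            raise ValueError(f"action {index}: missing keys {sorted(missing)}")
    return data["actions"]
```

Failing early with the index of the offending action is usually easier to debug than a selector error halfway through a browser session.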

Output Formats

CSV:

title,price,link,url
"Product A",29.99,https://...,https://...
"Product B",39.99,https://...,https://...

JSON:

[
  {
    "title": "Product A",
    "price": "29.99",
    "link": "https://...",
    "scraped_at": "2026-03-07T16:00:00"
  }
]

Excel (XLSX):

- Same as CSV but with formatting options

- Multiple sheets support

- Auto-fit columns
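
To make the CSV and JSON shapes above concrete, here is a minimal standard-library sketch that serializes a list of scraped records. It is not the skill's exporter: stamp, to_csv, and to_json are hypothetical helpers, and XLSX is left out because it would need a third-party library.

```python
import csv
import io
import json
from datetime import datetime
from typing import Optional


def stamp(records: list[dict], now: Optional[datetime] = None) -> list[dict]:
    """Return copies of the records with a scraped_at ISO timestamp added."""
    when = (now or datetime.now()).isoformat(timespec="seconds")
    return [{**record, "scraped_at": when} for record in records]


def to_csv(records: list[dict]) -> str:
    """Serialize records as CSV, using the union of keys as columns."""
    if not records:
        return ""
    # dict.fromkeys keeps first-seen order while removing duplicate keys.
    columns = list(dict.fromkeys(key for record in records for key in record))
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="", lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json(records: list[dict]) -> str:
    """Serialize records as a JSON array, keeping non-ASCII text readable."""
    return json.dumps(records, ensure_ascii=False, indent=2)
```

Records that lack a column are written with an empty cell, so pages with missing elements still produce aligned rows.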

Examples

Example 1: Scrape E-commerce Products

python scripts/scrape_paginated.py \
  --url "https://example.com/shop?page={page}" \
  --pages 5 \
  --fields "name=.product-name,price=.price,rating=.stars,reviews=.review-count,url=a.href" \
  --output products.csv \
  --delay 3

Example 2: Scrape News Articles

python scripts/scrape_page.py \
  --url "https://news-site.com/latest" \
  --fields "headline=h2.article-title,summary=.article-summary,author=.byline,date=.publish-date,url=a.read-more.href" \
  --output articles.json \
  --format json

Example 3: Scrape Job Postings

python scripts/scrape_infinite_scroll.py \
  --url "https://jobs-site.com/search" \
  --scrolls 10 \
  --fields "title=.job-title,company=.company-name,location=.location,salary=.salary,posted=.date-posted,url=a.job-link.href" \
  --output jobs.csv \
  --scroll-delay 1500

Example 4: Scrape Real Estate Listings

python scripts/scrape_paginated.py \
  --url "https://realestate.com/listings?page={page}" \
  --pages 20 \
  --fields "address=.property-address,price=.listing-price,beds=.bedrooms,baths=.bathrooms,sqft=.square-feet,url=a.property-link.href" \
  --output listings.xlsx \
  --format xlsx \
  --delay 5

Best Practices

- Respect robots.txt - Check and follow site rules

- Rate limiting - Add delays between requests (2-5s recommended)

- Error handling - Handle missing elements gracefully

- User-Agent - Use realistic browser headers

- Retry logic - Implement retries for failed requests

- Data validation - Validate extracted data before saving

- Storage - Save intermediate results for long scrapes
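
Two of the practices above, respecting robots.txt and retrying failed requests, can be sketched with the Python standard library. This is an illustration under assumptions rather than part of the skill: allowed and with_retries are hypothetical helpers, the robots.txt text is passed in directly so that no network access is needed, and the doubling backoff is one common choice among several.

```python
import time
from typing import Callable, TypeVar
from urllib.robotparser import RobotFileParser

T = TypeVar("T")


def allowed(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Check a URL against already-downloaded robots.txt content."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def with_retries(
    fetch: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch() until it succeeds, doubling the delay after each failure."""
    for attempt in range(attempts):
        try:
            return fetch()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")
```

Passing sleep as a parameter keeps the retry helper easy to exercise without real waiting.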

Anti-Scraping Measures

Some sites employ anti-scraping techniques:

| Measure | Countermeasure |
|---|---|
| IP blocking | Use proxies, rotate IPs |
| CAPTCHA | Manual solving or CAPTCHA services |
| Rate limiting | Increase delays, randomize timing |
| JavaScript challenges | Use browser automation (agent-browser) |
| Honeypot traps | Avoid hidden fields, validate selectors |
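
For the "randomize timing" entry in the Rate limiting row, one simple approach is to draw each pause from a range instead of using a fixed value. The sketch below assumes the 2-5 second range recommended under Best Practices; jittered_delays is a hypothetical helper, and the skill's --delay flag is documented only as a fixed number of seconds.

```python
import random
from typing import Optional


def jittered_delays(
    count: int, low: float = 2.0, high: float = 5.0, seed: Optional[int] = None
) -> list[float]:
    """Return `count` pause lengths in seconds, drawn uniformly from [low, high]."""
    if low > high:
        raise ValueError("low must not exceed high")
    rng = random.Random(seed)  # a seed makes a run reproducible when debugging
    return [rng.uniform(low, high) for _ in range(count)]
```

Each value could be passed to time.sleep between page requests in a custom driver script.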

Legal Considerations

- Public data: Generally legal to scrape

- Terms of Service: Review site ToS before scraping

- Copyright: Don't republish copyrighted content

- Personal data: GDPR/privacy laws may apply

- Commercial use: May require permission

Disclaimer: This skill is for educational purposes. Users are responsible for compliance with applicable laws and website terms.

Troubleshooting

- Elements not found: Verify CSS selectors with browser dev tools

- Empty results: Check if content is JavaScript-rendered (use dynamic scraping)

- Timeout errors: Increase wait time or check network

- Blocked requests: Add delays, rotate user agents, or use proxies

- Incomplete data: Verify pagination or scroll handling

References

CSS Selector Guide

See references/css-selectors.md for comprehensive selector examples.

Common Website Patterns

See references/website-patterns.md for common HTML structures and selectors.
