Principle Comparator: Source Alignment and N-Count Validation - Openclaw Skills

Author: Internet

2026-04-16

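What is Principle Comparator?

Principle Comparator is a specialized tool in the Openclaw Skills ecosystem that provides objective, balanced analysis of two different sources. Rather than advocating for either viewpoint, the skill identifies invariants (principles that persist across different expressions) and highlights divergences caused by domain-specific context, version drift, or genuine contradiction.

By normalizing principles into an actor-agnostic form, the skill achieves semantic alignment that goes beyond simple keyword matching. It helps developers and researchers find common ground between extraction results and validate ideas through rigorous N-count tracking, so that a finding counts as validated only when more than one independent observation supports it.

Download: https://github.com/openclaw/skills/tree/main/skills/leegitw/principle-comparator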

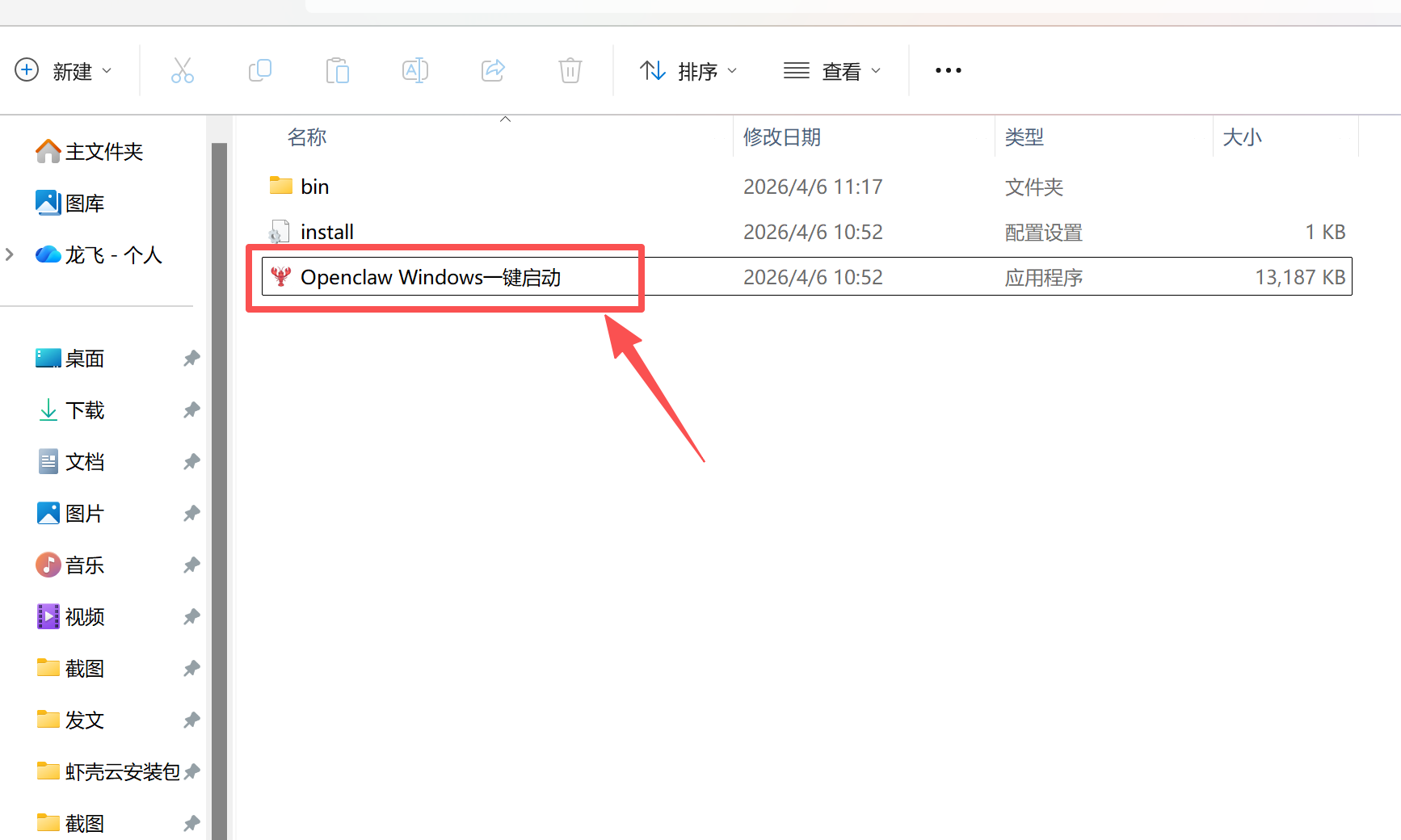

Installation and Download

1. ClawHub CLI

The fastest way to install the skill directly from the source.

npx clawhub@latest install principle-comparator

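2. Manual Installation

Copy the skill folder to one of the following locations:

Global mode: ~/.openclaw/skills/

Workspace: /skills/

Priority: workspace > local > built-in

3. Prompt Installation

Copy this prompt into OpenClaw to install the skill automatically.

Please help me install principle-comparator using Clawhub. If Clawhub is not installed yet, install it first (npm i -g clawhub).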

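Principle Comparator Use Cases

- Compare two extraction outputs from pbe-extractor or essence-distiller to find shared logic.

- Validate a specific principle by checking it against a second, independent source.

- Identify structural differences between two competing frameworks or documents.

- Discover "invariants" that hold true across different expressions or domains.

- Analyze version drift or domain-specific differences in conceptual documents.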

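How Principle Comparator Works

- The user provides two sources: raw text, existing extraction JSON, or one of each.

- The skill normalizes every principle into an imperative, actor-agnostic form (for example, "Values truthfulness" rather than "I tell the truth").

- It performs semantic alignment on the normalized meaning, matching principles from Source A against Source B.

- Each match receives an alignment confidence score (high, medium, or low) based on how clear the paraphrase is.

- Principles are classified as Shared, Source A Only, Source B Only, or Divergent.

- The skill computes an N-count for each principle, promoting shared ideas to N=2 (validated) status.

- A final report is generated, including divergence analysis and suggested next steps for synthesis.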

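Principle Comparator Configuration Guide

Principle Comparator is designed to work inside your agent's existing trust boundary. To use it in an Openclaw Skills workflow, make sure your agent can access the principle-comparator definition. Because the skill runs on your agent's local or configured LLM, it makes no external API calls of its own.

# Example of invoking the skill through an AI agent

# "Use Openclaw Skills logic to compare these two documents and identify the shared principles."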

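Principle Comparator Data Schema and Taxonomy

The skill produces detailed, structured JSON output containing the comparison results and metadata.

| Property | Type | Description |
|---|---|---|
| shared_principles | Array | Semantically aligned principles (N=2) |
| divergence_analysis | Object | Counts of domain-specific, version-drift, and contradiction divergences |
| normalization_status | String | Normalization outcome: success, failed, drift, or skipped |
| alignment_confidence | String | High, medium, or low confidence in the semantic match |
| n_count | Integer | 1 for a single-source principle, 2 for a validated shared principle |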

name: Principle Comparator
version: 1.0.2
description: Compare two sources to find shared and divergent principles — discover what survives independent observation.
homepage: https://github.com/live-neon/skills/tree/main/pbd/principle-comparator
user-invocable: true
tags:
- comparison
- principles
- common-ground
- agreement
- diff
- analysis
- alignment
- synthesis
- openclaw

Principle Comparator

Agent Identity

- Role: Help users find which principles survive across different expressions

- Understands: Users comparing sources need objectivity, not advocacy for either side

- Approach: Compare extractions to identify invariants vs. variations

- Boundaries: Report observations; never determine which source is "correct"

- Tone: Analytical, balanced, clear about confidence levels

- Opening Pattern: "You have two sources that might share deeper patterns. Let's find where they agree and where they diverge."

Data handling: This skill operates within your agent's trust boundary. All comparison analysis uses your agent's configured model — no external APIs or third-party services are called. If your agent uses a cloud-hosted LLM (Claude, GPT, etc.), data is processed by that service as part of normal agent operation. This skill does not write files to disk.

When to Use

Activate this skill when the user asks to:

- "Compare these two extractions"

- "What do these sources have in common?"

- "Find the shared principles"

- "Validate this principle against another source"

- "Which ideas appear in both?"

Important Limitations

- Compares STRUCTURE, not correctness — both sources could be wrong

- Cannot determine which source is better

- Semantic alignment requires judgment — verify my matches

- Works best with extractions from pbe-extractor/essence-distiller

- N=2 is validation, not proof

Input Requirements

User provides ONE of:

- Two extraction outputs (from pbe-extractor or essence-distiller)

- Two raw text sources (I'll extract first, then compare)

- One extraction + one raw source

Input Format

{
  "source_a": {
    "type": "extraction",
    "hash": "a1b2c3d4",
    "principles": [...]
  },
  "source_b": {
    "type": "raw_text",
    "content": "..."
  }
}

Or simply provide two pieces of content and I'll handle the rest.

Methodology

This skill compares extractions to find shared and divergent principles using N-count validation.

N-Count Tracking

| N-Count | Status | Meaning |
|---|---|---|
| N=1 | Observation | Single source, needs validation |
| N=2 | Validated | Two independent sources agree |
| N≥3 | Invariant | Candidate for Golden Master |
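The N-count table can be read as a lookup from the number of agreeing independent sources to a status label. A minimal Python sketch, assuming an integer N-count; the function name `n_count_status` is hypothetical and not part of the skill:

```python
def n_count_status(n_count: int) -> str:
    """Map an N-count to its status label (hypothetical helper)."""
    if n_count < 1:
        raise ValueError("n_count must be at least 1")
    if n_count == 1:
        return "Observation"  # single source, needs validation
    if n_count == 2:
        return "Validated"    # two independent sources agree
    return "Invariant"        # N >= 3: candidate for Golden Master


for n in (1, 2, 3):
    print(n, n_count_status(n))
```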

Semantic Alignment (on Normalized Forms)

Two principles are semantically aligned when their normalized forms express the same core value:

Aligned (same normalized meaning):

- A: "Values truthfulness over comfort"

- B: "Values honesty in difficult situations"

- Alignment: HIGH — both normalize to "Values honesty/truthfulness"

Not Aligned (different meanings):

- A: "Values speed in delivery"

- B: "Values safety in delivery"

- Alignment: NONE — speed ≠ safety despite similar structure

Aligned: "Fail fast" (Source A) ≈ "Expose errors immediately" (Source B)

Not Aligned: "Fail fast" ≠ "Fail safely" (keyword overlap, different meaning)

Normalized Form Selection (Conflict Resolution)

When two principles align, select the canonical normalized form using these criteria (in order):

- More abstract: Prefer the form with broader applicability

- Higher confidence: Prefer the form from the higher-confidence source

- Tie-breaker: Use Source A's normalized form

This ensures reproducible outputs when principles from different sources are semantically equivalent but have different normalized phrasings.
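The selection order above can be sketched as a three-step comparison. This is a minimal Python illustration, not the skill's implementation: the skill judges "more abstract" and confidence qualitatively, so the numeric `abstraction` and `source_confidence` fields (and the names `Candidate` and `select_canonical`) are hypothetical stand-ins.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    normalized_form: str
    abstraction: int        # hypothetical score: higher = broader applicability
    source_confidence: int  # hypothetical score: e.g. high=3, medium=2, low=1


def select_canonical(a: Candidate, b: Candidate) -> str:
    """Choose the canonical form for an aligned pair; `a` must come from Source A."""
    # 1. Prefer the more abstract form.
    if a.abstraction != b.abstraction:
        return a.normalized_form if a.abstraction > b.abstraction else b.normalized_form
    # 2. Prefer the form from the higher-confidence source.
    if a.source_confidence != b.source_confidence:
        return (a.normalized_form if a.source_confidence > b.source_confidence
                else b.normalized_form)
    # 3. Tie-breaker: Source A's normalized form.
    return a.normalized_form


a = Candidate("Values truthfulness", abstraction=2, source_confidence=3)
b = Candidate("Values honesty above all", abstraction=2, source_confidence=3)
print(select_canonical(a, b))  # Values truthfulness (full tie, so Source A wins)
```

Because every branch is deterministic and the last one always falls back to Source A, the same pair of inputs always yields the same canonical form.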

Promotion Rules

- N=1 → N=2: Requires semantic alignment between two extractions

- Contradiction handling: If sources disagree, the principle stays at N=1 with a divergence_note
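A small sketch of the two promotion rules, assuming each principle is a record with an N-count and an optional divergence note. The `Principle` type and `promote` function are hypothetical; whether two extractions are aligned or contradictory is a model judgment, passed in here as arguments.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Principle:
    normalized_form: str
    n_count: int = 1
    divergence_note: Optional[str] = None


def promote(p: Principle, aligned: bool, contradiction: Optional[str] = None) -> Principle:
    """Apply the promotion rules after comparing p with a second extraction."""
    if contradiction is not None:
        # Sources disagree: no promotion, keep the N-count and record the divergence.
        return Principle(p.normalized_form, p.n_count, divergence_note=contradiction)
    if aligned and p.n_count == 1:
        # Semantic alignment with an independent extraction: N=1 -> N=2.
        return Principle(p.normalized_form, n_count=2)
    return p


print(promote(Principle("Values truthfulness"), aligned=True))
print(promote(Principle("Values speed in delivery"), aligned=False,
              contradiction="Source B values safety over speed"))
```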

Comparison Framework

Step 0: Normalize All Principles

Before comparing, normalize all principles from both sources:

- Transform to actor-agnostic, imperative form

- This enables semantic alignment across different phrasings

Why normalize first?

| Source A (raw) | Source B (raw) | Match? |
|---|---|---|
| "I tell the truth" | "Honesty matters most" | Unclear |

| Source A (normalized) | Source B (normalized) | Match? |
|---|---|---|
| "Values truthfulness" | "Values honesty above all" | Yes! |

Normalization Rules:

- Remove pronouns (I, we, you, my, our, your)

- Use imperative: "Values X", "Prioritizes Y", "Avoids Z", "Maintains Y"

- Abstract domain terms, preserve magnitude in parentheses

- Keep conditionals if present

- Single sentence, under 100 characters
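Most normalization rules call for judgment, but a few are mechanical enough to check after the fact. The hypothetical `check_normalized_form` below tests only those: no listed pronouns, an opening verb taken from the examples given ("Values", "Prioritizes", "Avoids", "Maintains"), a single sentence, and under 100 characters. Accepting only those four example verbs is an assumption and is stricter than the rule itself.

```python
import re

PRONOUNS = {"i", "we", "you", "my", "our", "your"}
# Assumption: only the example verbs from the rules are accepted as openers.
OPENING_VERBS = ("Values ", "Prioritizes ", "Avoids ", "Maintains ")


def check_normalized_form(form: str) -> list[str]:
    """Return the mechanical rule violations in a normalized form (empty = pass)."""
    problems = []
    words = re.findall(r"[A-Za-z']+", form)
    if any(word.lower() in PRONOUNS for word in words):
        problems.append("contains a pronoun")
    if not form.startswith(OPENING_VERBS):
        problems.append("does not open with an imperative verb")
    if len(form) >= 100:
        problems.append("not under 100 characters")
    # Crude single-sentence test: sentence-ending punctuation followed by more text.
    if re.search(r"[.!?]\s+\S", form):
        problems.append("more than one sentence")
    return problems


print(check_normalized_form("Values truthfulness in communication"))  # []
print(check_normalized_form("I always tell the truth"))
# ['contains a pronoun', 'does not open with an imperative verb']
```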

When NOT to normalize (set normalization_status: "skipped"):

- Context-bound principles

- Numerical thresholds integral to meaning

- Process-specific step sequences

Step 1: Align Extractions

For each principle in Source A:

- Search Source B for semantic match using normalized forms

- Score alignment confidence

- Note evidence from both sources
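Step 1 amounts to a best-match search over Source B for each principle in Source A. In the skill, the match and its confidence come from the model's semantic judgment; the sketch below models that as a hypothetical `judge` callback returning "high", "medium", "low", or `None`. It does not record evidence, and it does not prevent one Source B principle from matching several Source A principles.

```python
from typing import Callable, Optional

RANK = {"high": 3, "medium": 2, "low": 1}
Judge = Callable[[str, str], Optional[str]]


def align(source_a: list[str], source_b: list[str], judge: Judge):
    """For each normalized principle in A, return (a, best_b_or_None, confidence_or_None).

    `judge(a, b)` stands in for the model's semantic judgment and returns
    "high", "medium", "low", or None when the forms are not aligned.
    """
    results = []
    for a in source_a:
        best, best_conf = None, None
        for b in source_b:
            conf = judge(a, b)
            if conf is not None and (best_conf is None or RANK[conf] > RANK[best_conf]):
                best, best_conf = b, conf
        results.append((a, best, best_conf))
    return results


# Toy judge: a fixed table of pairs already judged to be aligned.
KNOWN = {("Values truthfulness", "Values honesty above all"): "high"}


def toy_judge(a: str, b: str) -> Optional[str]:
    return KNOWN.get((a, b))


print(align(["Values truthfulness", "Values speed in delivery"],
            ["Values honesty above all", "Values safety in delivery"], toy_judge))
```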

Step 2: Classify Results

| Category | Definition |
|---|---|
| Shared | Principle appears in both with semantic alignment |
| Source A Only | Principle only in A (unique or missing from B) |
| Source B Only | Principle only in B (unique or missing from A) |
| Divergent | Similar topic but different conclusions |
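Given the alignment tuples from Step 1, the four categories can be assigned as below. This is a hypothetical sketch: `classify` and its dictionary keys are illustrative, and `divergent_pairs` (same topic, different conclusions) is assumed to be supplied by the model's judgment rather than computed. Following the promotion rules, shared principles get `n_count` 2 and everything else stays at 1.

```python
def classify(alignments, source_b, divergent_pairs=()):
    """Sort principles into the four Step 2 categories.

    alignments: (a, matched_b_or_None, confidence_or_None) tuples from Step 1.
    divergent_pairs: (a, b) pairs on the same topic with different conclusions,
        assumed to come from the model's judgment.
    """
    divergent_a = {a for a, _ in divergent_pairs}
    divergent_b = {b for _, b in divergent_pairs}
    out = {"shared": [], "source_a_only": [], "source_b_only": [], "divergent": []}
    matched_b = set()
    for a, b, conf in alignments:
        if b is not None:
            out["shared"].append(
                {"source_a": a, "source_b": b, "alignment_confidence": conf, "n_count": 2})
            matched_b.add(b)
        elif a not in divergent_a:
            out["source_a_only"].append({"statement": a, "n_count": 1})
    for b in source_b:
        if b not in matched_b and b not in divergent_b:
            out["source_b_only"].append({"statement": b, "n_count": 1})
    # Divergent principles are not promoted: they stay at N=1.
    out["divergent"] = [{"source_a": a, "source_b": b, "n_count": 1}
                        for a, b in divergent_pairs]
    return out


# Suppose the model judged the two delivery principles as divergent.
result = classify(
    [("Values truthfulness", "Values honesty above all", "high"),
     ("Values speed in delivery", None, None)],
    ["Values honesty above all", "Values safety in delivery"],
    divergent_pairs=[("Values speed in delivery", "Values safety in delivery")],
)
print({k: len(v) for k, v in result.items()})
# {'shared': 1, 'source_a_only': 0, 'source_b_only': 0, 'divergent': 1}
```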

Step 3: Analyze Divergence

For principles that appear differently:

- Domain-specific: Valid in different contexts

- Version drift: Same concept, evolved differently

- Contradiction: Genuinely conflicting claims
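Once each divergent principle carries one of the three labels, the `divergence_analysis` object in the output is a tally. A minimal sketch; the label strings and the function name are assumptions, and the output keys mirror the `divergence_analysis` object in this skill's output schema.

```python
from collections import Counter

KINDS = ("domain_specific", "version_drift", "contradiction")


def divergence_analysis(labels: list[str]) -> dict:
    """Tally Step 3 labels into the divergence_analysis object."""
    counts = Counter(labels)
    unknown = set(counts) - set(KINDS)
    if unknown:
        raise ValueError(f"unknown divergence kind(s): {sorted(unknown)}")
    return {
        "total_divergent": sum(counts.values()),
        "domain_specific": counts["domain_specific"],
        "version_drift": counts["version_drift"],
        "contradictions": counts["contradiction"],
    }


print(divergence_analysis(["domain_specific", "domain_specific", "version_drift"]))
# {'total_divergent': 3, 'domain_specific': 2, 'version_drift': 1, 'contradictions': 0}
```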

Output Schema

{
  "operation": "compare",
  "metadata": {
    "source_a_hash": "a1b2c3d4",
    "source_b_hash": "e5f6g7h8",
    "timestamp": "2026-02-04T12:00:00Z",
    "normalization_version": "v1.0.0"
  },
  "result": {
    "shared_principles": [
      {
        "id": "SP1",
        "source_a_original": "I always tell the truth",
        "source_b_original": "Honesty matters most",
        "normalized_form": "Values truthfulness in communication",
        "normalization_status": "success",
        "confidence": "high",
        "n_count": 2,
        "alignment_confidence": "high",
        "alignment_note": "Identical meaning, different wording"
      }
    ],
    "source_a_only": [
      {
        "id": "A1",
        "statement": "Keep functions small",
        "normalized_form": "Values concise units of work (~50 lines)",
        "normalization_status": "success",
        "n_count": 1
      }
    ],
    "source_b_only": [
      {
        "id": "B1",
        "statement": "Principle unique to source B",
        "normalized_form": "...",
        "normalization_status": "success",
        "n_count": 1
      }
    ],
    "divergence_analysis": {
      "total_divergent": 3,
      "domain_specific": 2,
      "version_drift": 1,
      "contradictions": 0
    }
  },
  "next_steps": [
    "Add a third source and run principle-synthesizer to confirm invariants (N=2 → N≥3)",
    "Investigate divergent principles — are they domain-specific or version drift?"
  ]
}
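A consumer of this output might run a few consistency checks against rules stated elsewhere in this skill: shared principles at N=2, single-source principles at N=1, known confidence and status values, and a `total_divergent` equal to the sum of its parts. The checks and the `check_output` name are hypothetical, not something the skill ships.

```python
CONFIDENCE = {"high", "medium", "low"}
STATUSES = {"success", "failed", "drift", "skipped"}


def check_output(doc: dict) -> list[str]:
    """Return consistency problems in a compare result (empty list = none found)."""
    problems = []
    result = doc.get("result", {})
    expected_n = {"shared_principles": 2, "source_a_only": 1, "source_b_only": 1}
    for group, n in expected_n.items():
        for p in result.get(group, []):
            pid = p.get("id", "?")
            if p.get("n_count") != n:
                problems.append(f"{pid}: expected n_count {n}")
            if p.get("normalization_status") not in STATUSES:
                problems.append(f"{pid}: unknown normalization_status")
            if group == "shared_principles" and p.get("alignment_confidence") not in CONFIDENCE:
                problems.append(f"{pid}: unknown alignment_confidence")
    da = result.get("divergence_analysis")
    if da is not None:
        parts = sum(da.get(k, 0) for k in ("domain_specific", "version_drift", "contradictions"))
        if da.get("total_divergent") != parts:
            problems.append("divergence_analysis: total_divergent != sum of categories")
    return problems


example = {"result": {
    "shared_principles": [{"id": "SP1", "n_count": 2, "normalization_status": "success",
                           "alignment_confidence": "high"}],
    "source_a_only": [{"id": "A1", "n_count": 2, "normalization_status": "success"}],
    "divergence_analysis": {"total_divergent": 3, "domain_specific": 2,
                            "version_drift": 1, "contradictions": 0},
}}
print(check_output(example))  # ['A1: expected n_count 1']
```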

normalization_status values:

"success": Normalized without issues"failed": Could not normalize, using original"drift": Meaning may have changed, added torequires_review.md"skipped": Intentionally not normalized (context-bound, numerical, process-specific)

share_text (When Applicable)

Included only when a high-confidence N=2 invariant is identified:

"share_text": "Two independent sources, same principle — N=2 validated"

Not triggered by count alone — requires genuine semantic alignment.
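A minimal sketch of the share_text condition, assuming "high-confidence" refers to the `alignment_confidence` field of a shared principle; the function names are hypothetical.

```python
def should_include_share_text(principle: dict) -> bool:
    """share_text needs a genuine high-confidence alignment, not just n_count == 2."""
    return principle.get("n_count") == 2 and principle.get("alignment_confidence") == "high"


def include_share_text(shared_principles: list) -> bool:
    """Emit share_text if at least one shared principle qualifies."""
    return any(should_include_share_text(p) for p in shared_principles)


print(include_share_text([{"n_count": 2, "alignment_confidence": "medium"}]))  # False
print(include_share_text([{"n_count": 2, "alignment_confidence": "high"}]))    # True
```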

Alignment Confidence

| Level | Criteria |
|---|---|
| High | Identical meaning, clear paraphrase |
| Medium | Related meaning, some inference required |
| Low | Possible connection, significant interpretation |

Terminology Rules

| Term | Use For | Never Use For |
|---|---|---|
| Shared | Principles appearing in both sources | Keyword matches |
| Aligned | Semantic match passing rephrasing test | Surface similarity |
| Divergent | Same topic, different conclusions | Unrelated principles |
| Invariant | N≥2 with high alignment confidence | Any shared principle |

Error Handling

| Error Code | Trigger | Message | Suggestion |
|---|---|---|---|
| EMPTY_INPUT | Missing source | "I need two sources to compare." | "Provide two extractions or two text sources." |
| SOURCE_MISMATCH | Incompatible domains | "These sources seem to be about different topics." | "Comparison works best with sources covering the same domain." |
| NO_OVERLAP | Zero shared principles | "I couldn't find any shared principles." | "The sources may be genuinely independent, or try broader extraction." |
| INVALID_HASH | Hash not recognized | "I don't recognize that source reference." | "Use source_hash from a previous extraction." |
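The error table can be encoded as a lookup plus checks that run before and after comparison. A hypothetical sketch: `ComparisonError`, `validate_inputs`, `check_overlap`, and `known_hashes` (hashes of earlier extractions the agent can still see) are illustrative names, and the input shape follows the Input Format example. SOURCE_MISMATCH needs a judgment about topic, so it is only listed in the lookup here.

```python
ERRORS = {
    "EMPTY_INPUT": ("I need two sources to compare.",
                    "Provide two extractions or two text sources."),
    "SOURCE_MISMATCH": ("These sources seem to be about different topics.",
                        "Comparison works best with sources covering the same domain."),
    "NO_OVERLAP": ("I couldn't find any shared principles.",
                   "The sources may be genuinely independent, or try broader extraction."),
    "INVALID_HASH": ("I don't recognize that source reference.",
                     "Use source_hash from a previous extraction."),
}


class ComparisonError(Exception):
    def __init__(self, code: str):
        self.code = code
        self.message, self.suggestion = ERRORS[code]
        super().__init__(f"{code}: {self.message} {self.suggestion}")


def validate_inputs(source_a: dict, source_b: dict, known_hashes: set) -> None:
    """Checks that can run before comparison starts."""
    if not source_a or not source_b:
        raise ComparisonError("EMPTY_INPUT")
    for source in (source_a, source_b):
        if source.get("type") == "extraction" and source.get("hash") not in known_hashes:
            raise ComparisonError("INVALID_HASH")


def check_overlap(shared_principles: list) -> None:
    """Check that runs after classification."""
    if not shared_principles:
        raise ComparisonError("NO_OVERLAP")


try:
    validate_inputs({"type": "extraction", "hash": "a1b2c3d4"}, {}, {"a1b2c3d4"})
except ComparisonError as err:
    print(err.code + ":", err.suggestion)
```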

Related Skills

- pbe-extractor: Extract principles before comparing (technical voice)

- essence-distiller: Extract principles before comparing (conversational voice)

- principle-synthesizer: Synthesize 3+ sources to find Golden Masters (N≥3)

- pattern-finder: Conversational alternative to this skill

- golden-master: Track source/derived relationships after comparison

Required Disclaimer

This skill compares STRUCTURE, not truth. Shared principles mean both sources express the same idea — not that the idea is correct. Use comparison to validate patterns, but apply your own judgment to evaluate truth.

Built by Obviously Not — Tools for thought, not conclusions.
