Video Fetch: YouTube & Bilibili Video Transcription - Openclaw Skills

Author: Internet

2026-04-16

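What is Video Fetch — YouTube & Bilibili?

The Video Fetch skill is a solution designed for AI agents to ingest video content from the world's largest platforms. As part of the Openclaw Skills ecosystem, it bridges video media and text analysis. Whether a video has official subtitles or needs them generated through speech-to-text, the tool preserves fidelity by fetching the official transcript first and then falling back to ElevenLabs, local Whisper, or the video description.

The skill is optimized for developers and researchers who need to work around geo-restrictions and platform-specific obstacles. By integrating Openclaw Skills into your workflow, you can process Bilibili's AI-generated subtitles and YouTube's vast library with high-accuracy transcription and automatic translation.

Download: https://github.com/openclaw/skills/tree/main/skills/lambertalpha/youtube-fetch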

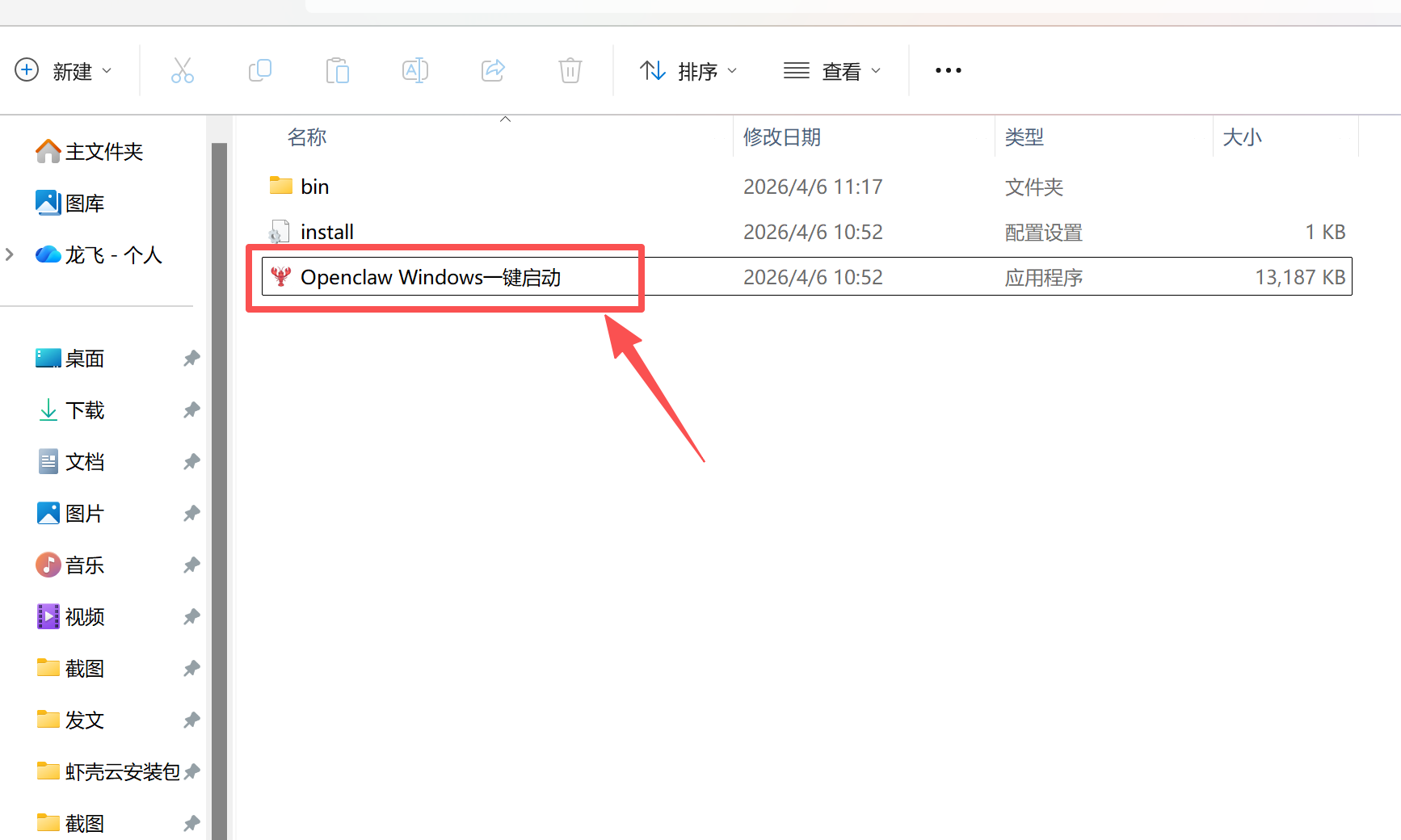

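Installation and Download

1. ClawHub CLI

The fastest way to install the skill directly from the source.

npx clawhub@latest install youtube-fetch

2. Manual installation

Copy the skill folder to one of the following locations:

Global: ~/.openclaw/skills/

Workspace: /skills/

Priority: workspace > local > built-in

3. Prompt-based installation

Copy this prompt into OpenClaw to install the skill automatically.

Please help me install youtube-fetch using Clawhub. If Clawhub is not installed yet, install it first (npm i -g clawhub).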

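Video Fetch — YouTube & Bilibili use cases

- Summarize long tutorials or lectures for fast information retrieval.

- Transcribe foreign-language Bilibili content for research and translation projects.

- Extract text from videos that lack official subtitles using high-accuracy STT.

- Automate content research pipelines within the Openclaw Skills framework.

How it works:

- The skill accepts a video URL and automatically detects whether the platform is YouTube or Bilibili.

- It first tries to fetch official subtitles or a transcript through the platform's native API.

- If subtitles are missing, it downloads the audio stream with yt-dlp and routes it to ElevenLabs Scribe v2 for high-accuracy transcription.

- If no API key is available, it triggers the local Whisper fallback and processes the audio on your machine.

- If audio processing is not possible, it extracts the creator's video description as the final fallback.

- The resulting text is then passed to the AI agent for summarization or analysis in the user's preferred language.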

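Video Fetch — YouTube & Bilibili configuration guide

To start using this skill within the Openclaw Skills collection, install the required dependencies:

# Core requirements

pip install youtube-transcript-api requests yt-dlp

# SOCKS proxy support for YouTube (residential proxy)

pip install pysocks

# Optional: local Whisper fallback

pip install openai-whisper

Configuration consists of setting ELEVENLABS_API_KEY for high-quality transcription and, optionally, providing a Bilibili cookie file for authenticated subtitle access.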

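Video Fetch — YouTube & Bilibili data architecture and taxonomy

The skill returns structured data to stay compatible with various Openclaw Skills workflows:

| Data point | Description |
|---|---|
| Content | Full transcript text or speech-to-text output |
| Source type | Identifies whether the data came from the API, ElevenLabs, Whisper, or the description |
| Platform | Video source (YouTube or Bilibili) |
| Metadata | Title, description, and author information extracted from the page |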
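The four data points above could be modeled as a small record type. The sketch below is only illustrative: the class name `FetchResult`, the field names, and the source-type strings are assumptions, since the source does not show the actual JSON keys the skill emits.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class FetchResult:
    """Hypothetical container mirroring the four documented data points."""
    content: str       # full transcript or speech-to-text output
    source_type: str   # assumed values: "api", "elevenlabs", "whisper", "description"
    platform: str      # "youtube" or "bilibili"
    metadata: dict = field(default_factory=dict)  # title, description, author


result = FetchResult(
    content="Creator's written summary of the video.",
    source_type="description",
    platform="youtube",
    metadata={"title": "Demo video", "author": "Demo channel"},
)

# Serialize for a downstream workflow; ensure_ascii=False keeps CJK text readable.
print(json.dumps(asdict(result), ensure_ascii=False, indent=2))
```

Keeping the source type next to the content lets a consumer decide how much to trust the text before summarizing it.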

name: Video Fetch — YouTube & Bilibili

description: Fetch, transcribe, and summarize any YouTube or Bilibili video. 4-level fallback: subtitle API → ElevenLabs STT (cloud, high-accuracy) → local Whisper → description. Supports proxy for geo-blocked regions, Bilibili cookie auth, and auto-translation to user's language. Use when the user shares a video link or asks to summarize/analyze a video.

Video Content Fetcher (YouTube & Bilibili)

Before You Start

This skill needs 1-3 things configured depending on your use case:

| What | Why | How |
|---|---|---|
| Residential IP proxy | YouTube blocks datacenter IPs. You MUST use a residential broadband proxy (e.g., HK home broadband). VPS/cloud IPs will fail. | --proxy socks5h://127.0.0.1:1080 (also install pysocks: pip install pysocks) |
| ElevenLabs API key | Default STT engine for audio transcription when no subtitles exist. Far more accurate than local Whisper, especially for Chinese. | export ELEVENLABS_API_KEY="sk_..." or --stt-api-key @~/.elevenlabs_key. Get key: https://elevenlabs.io/app/settings/api-keys |
| Bilibili cookie | Bilibili AI subtitles require login. Without cookie, falls back to STT. | Export SESSDATA + bili_jct from browser, save to ~/.bilibili_cookie, use --cookie @~/.bilibili_cookie. See details below. |

- Minimum setup for YouTube: proxy + ElevenLabs API key (or local Whisper).

- Minimum setup for Bilibili: ElevenLabs API key (or local Whisper). Cookie optional but recommended.

Quick Start

# YouTube

python3 scripts/youtube_fetch.py "https://www.youtube.com/watch?v=VIDEO_ID" --proxy socks5h://127.0.0.1:1080

# Bilibili

python3 scripts/youtube_fetch.py "https://www.bilibili.com/video/BVxxxxxxxxxx"

Fallback Chain

1. Subtitle/Transcript API: highest fidelity, full spoken content. If unavailable, go to step 2.

2. ElevenLabs STT: default, cloud-based, high accuracy with punctuation. If there is no API key or the call fails, go to step 3.

3. Local Whisper: auto-fallback, requires a local install. If unavailable, go to step 4.

4. Video description: lowest fidelity, the creator's written summary only.

Always tell the user which source was used. If description-only, explicitly note that it's not a full transcript.
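The chain above amounts to trying each source in order and returning the first one that yields text, together with its name so the user can be told which source was used. The following is a minimal sketch of that pattern, not the skill's actual code: `run_fallback_chain` and the stand-in fetchers are hypothetical.

```python
def run_fallback_chain(steps):
    """Try each (source_name, fetch) pair in order; return the first non-empty result.

    Each fetch callable returns text, returns None/"" when the source is
    unavailable, or raises on failure. Both cases move on to the next step.
    """
    for name, fetch in steps:
        try:
            text = fetch()
        except Exception:
            text = None  # a failed source is treated the same as an unavailable one
        if text:
            return name, text
    return None, None


def _elevenlabs_without_key():
    # Stand-in for the cloud STT step when no API key is configured.
    raise RuntimeError("no API key")


# Stand-in fetchers that simulate a video with no subtitles and no API key.
steps = [
    ("subtitle_api", lambda: None),
    ("elevenlabs", _elevenlabs_without_key),
    ("whisper", lambda: "locally transcribed text"),
    ("description", lambda: "creator's written summary"),
]

source, text = run_fallback_chain(steps)
print(source)  # whisper
```

Returning the source name alongside the text is what makes the "always tell the user which source was used" rule easy to follow.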

Platform Support

| Platform | Subtitle source | STT fallback | Description fallback |
|---|---|---|---|
| YouTube | youtube-transcript-api | ElevenLabs / Whisper | HTML meta extraction |
| Bilibili | Player API (cookie required for AI subs) | ElevenLabs / Whisper | View API |

Platform is auto-detected from URL. Force with --platform youtube or --platform bilibili.
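URL-based detection of this kind can be done by matching the hostname against known domains. The sketch below is an assumption about how such a check might look, not the script's real logic. The function name `detect_platform` is hypothetical, and `youtu.be` is included as an assumption (the source only shows `youtube.com`, `bilibili.com`, and `b23.tv` links).

```python
from urllib.parse import urlparse

# Domains taken from the examples in this document, plus youtu.be as an assumption.
YOUTUBE_DOMAINS = ("youtube.com", "youtu.be")
BILIBILI_DOMAINS = ("bilibili.com", "b23.tv")


def detect_platform(url):
    """Return "youtube", "bilibili", or None based on the URL's hostname."""
    host = (urlparse(url).hostname or "").lower()

    def matches(domains):
        # Accept the bare domain and any subdomain (www., m., and so on).
        return any(host == d or host.endswith("." + d) for d in domains)

    if matches(YOUTUBE_DOMAINS):
        return "youtube"
    if matches(BILIBILI_DOMAINS):
        return "bilibili"
    return None


print(detect_platform("https://www.youtube.com/watch?v=VIDEO_ID"))  # youtube
print(detect_platform("https://b23.tv/xxxxxx"))                     # bilibili
```

Matching on the parsed hostname rather than a substring avoids misclassifying URLs that merely mention a platform name in their path or query string.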

Usage

# YouTube with proxy

python3 scripts/youtube_fetch.py VIDEO_URL --proxy socks5h://127.0.0.1:1080

# Bilibili (cookie required for subtitles; without it, falls back to STT/description)

# IMPORTANT: always use @filepath to avoid exposing credentials in shell history / process list

python3 scripts/youtube_fetch.py "https://www.bilibili.com/video/BVxxxxxxxxxx" --cookie @~/.bilibili_cookie

# Bilibili short link

python3 scripts/youtube_fetch.py "https://b23.tv/xxxxxx"

# Specify transcript languages (default: zh-Hans,zh-Hant,zh,en)

python3 scripts/youtube_fetch.py VIDEO_URL --langs "en,ja" --proxy PROXY

# Force local Whisper instead of ElevenLabs

python3 scripts/youtube_fetch.py VIDEO_URL --stt whisper --whisper-model medium

# Disable audio transcription entirely

python3 scripts/youtube_fetch.py VIDEO_URL --stt none

# JSON output

python3 scripts/youtube_fetch.py VIDEO_URL --json --proxy PROXY

# Save to file

python3 scripts/youtube_fetch.py VIDEO_URL --output /tmp/transcript.txt --proxy PROXY
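When the script is driven from another program, the flags shown above can be assembled into an argument list instead of a shell string. This is a rough sketch: the helper `build_command` is hypothetical, it uses only flags that appear in this section, and it assumes the script itself expands `~` in `@filepath` values.

```python
import shlex


def build_command(url, proxy=None, langs=None, stt=None,
                  cookie_file=None, json_output=False, output=None):
    """Assemble an argv list for scripts/youtube_fetch.py from documented flags."""
    cmd = ["python3", "scripts/youtube_fetch.py", url]
    if proxy:
        cmd += ["--proxy", proxy]
    if langs:
        cmd += ["--langs", ",".join(langs)]
    if stt:
        cmd += ["--stt", stt]  # documented values: "whisper", "none"
    if cookie_file:
        # Pass a file reference, never the raw cookie string.
        cmd += ["--cookie", "@" + cookie_file]
    if json_output:
        cmd.append("--json")
    if output:
        cmd += ["--output", output]
    return cmd


cmd = build_command(
    "https://www.bilibili.com/video/BVxxxxxxxxxx",
    cookie_file="~/.bilibili_cookie",
    json_output=True,
)
# shlex.join gives a copy-pasteable, safely quoted form for logging.
print(shlex.join(cmd))
```

An argument list can be handed to `subprocess.run(cmd)` without `shell=True`, which sidesteps quoting problems in URLs that contain `&` or `?`.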

Dependencies

Required:

- youtube-transcript-api (pip): YouTube transcript fetching

- requests (pip): HTTP requests for APIs

Required for STT (audio transcription):

- yt-dlp (pip or brew): audio download from YouTube/Bilibili

Optional (for local Whisper, used as auto-fallback when ElevenLabs is unavailable):

- openai-whisper (pip): local audio transcription

- ffmpeg (system): required by whisper

Optional (for SOCKS5 proxy):

- pysocks (pip): required if using socks5h:// proxies

# Required

pip install youtube-transcript-api requests

# Required for STT

pip install yt-dlp

# If using SOCKS5 proxy

pip install pysocks

# Optional: local Whisper fallback

pip install openai-whisper

brew install ffmpeg # or apt install ffmpeg

ElevenLabs STT (Default)

ElevenLabs Scribe v2 is the default speech-to-text engine. It provides significantly better accuracy than local Whisper, especially for Chinese — correct characters, automatic punctuation, and proper formatting (e.g., book title marks).

Setup:

# Option 1: Environment variable (recommended)

export ELEVENLABS_API_KEY="sk_your_key_here"

# Option 2: Key file

echo "sk_your_key_here" > ~/.elevenlabs_key && chmod 600 ~/.elevenlabs_key

# Then use: --stt-api-key @~/.elevenlabs_key

If no API key is available, the skill automatically falls back to local Whisper.

Security: Same rules as Bilibili cookie — use env var or @filepath, never pass the key directly on the command line.

Get your API key at: https://elevenlabs.io/app/settings/api-keys
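The `@filepath` convention and the environment-variable option can be handled by one small resolver. How the script actually implements this is not shown in the source, so the sketch below is an assumption: `resolve_secret` is a hypothetical helper, and the precedence (explicit value first, then environment) is a guess.

```python
import os
import tempfile
from pathlib import Path


def resolve_secret(value, env_var=None):
    """Resolve a secret from an "@filepath" reference, a literal, or an env var.

    "@~/.elevenlabs_key" -> contents of that file, whitespace stripped
    None                 -> value of env_var, if set
    """
    if value:
        if value.startswith("@"):
            path = Path(value[1:]).expanduser()
            return path.read_text(encoding="utf-8").strip()
        return value  # literal value; discouraged on the command line
    if env_var:
        return os.environ.get(env_var) or None
    return None


# Demo with a temporary key file standing in for ~/.elevenlabs_key.
with tempfile.TemporaryDirectory() as tmp:
    key_file = Path(tmp) / "elevenlabs_key"
    key_file.write_text("sk_your_key_here\n", encoding="utf-8")
    key_from_file = resolve_secret(f"@{key_file}")

print(key_from_file == "sk_your_key_here")  # True
```

Stripping whitespace matters because `echo "..." > file` appends a trailing newline to the stored key.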

Proxy Setup

Accessing YouTube from geo-blocked regions requires a proxy. Bilibili typically works directly from China.

- SOCKS5: socks5h://127.0.0.1:1080

- HTTP: http://127.0.0.1:1081

Important: Use residential IP proxies for YouTube. YouTube blocks datacenter/cloud server IPs. Residential broadband IPs (e.g., Hong Kong home broadband) work reliably, while VPS/cloud IPs will likely be blocked. Recommend routing only YouTube domains through the proxy.
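For code that makes its own HTTP calls, a proxy URL in either of the forms above maps onto the `proxies` dictionary accepted by the `requests` library. The sketch below only builds and validates that mapping without touching the network. The helper name `build_proxies` and the accepted scheme list are assumptions, not part of the skill.

```python
from urllib.parse import urlparse

# socks5h resolves DNS through the proxy; socks5 resolves it locally.
SUPPORTED_SCHEMES = {"socks5h", "socks5", "http", "https"}


def build_proxies(proxy_url):
    """Return a requests-style proxies mapping for a single proxy URL."""
    scheme = urlparse(proxy_url).scheme
    if scheme not in SUPPORTED_SCHEMES:
        raise ValueError(f"unsupported proxy scheme: {scheme!r}")
    # Route both plain and TLS traffic through the same proxy.
    return {"http": proxy_url, "https": proxy_url}


proxies = build_proxies("socks5h://127.0.0.1:1080")
print(proxies)

# Usage with requests (needs pysocks for socks schemes), shown as a comment
# because it performs a real network call:
#   requests.get(url, proxies=proxies, timeout=30)
```

Using `socks5h` rather than `socks5` keeps DNS lookups on the proxy side, which matters when the local resolver is also subject to blocking.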

Bilibili Cookie (Required for Subtitles)

Bilibili subtitles are almost entirely AI-generated and require login to access. Without cookies, the subtitle API returns empty results for virtually all videos, and the skill will fall back to STT or description.

How to get your cookie:

- Log in to bilibili.com in your browser

- Open DevTools (F12) > Application > Cookies

- Copy the values of SESSDATA and bili_jct

- Save to a file: echo "SESSDATA=xxx;bili_jct=xxx" > ~/.bilibili_cookie && chmod 600 ~/.bilibili_cookie

- Use: --cookie @~/.bilibili_cookie

Security warning:

- Your Bilibili cookie is a login credential. If leaked, others can act as you (post comments, send messages, etc.)

- Always use --cookie @filepath instead of passing the cookie string directly on the command line, because:

  - Command-line arguments are visible in ps aux to other users on the same machine

  - They get saved to shell history (~/.zsh_history, ~/.bash_history)

  - They may appear in Claude Code tool call logs

- Set file permissions: chmod 600 ~/.bilibili_cookie

- Add to .gitignore if the file is anywhere near a git repo

- Cookies expire periodically; refresh from browser when needed
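A cookie file in the `SESSDATA=xxx;bili_jct=xxx` format can be parsed and sanity-checked before use. The sketch below is an illustration under assumptions: the helper names are hypothetical, and the permission check mirrors the `chmod 600` advice on POSIX systems only (it is not meaningful on Windows).

```python
import os
import stat
import tempfile


def parse_cookie_string(raw):
    """Parse "name=value;name=value" into a dict, ignoring malformed parts."""
    cookies = {}
    for part in raw.strip().split(";"):
        if "=" not in part:
            continue
        name, value = part.split("=", 1)
        cookies[name.strip()] = value.strip()
    return cookies


def missing_fields(cookies, required=("SESSDATA", "bili_jct")):
    """Return the required cookie names that are absent or empty."""
    return [name for name in required if not cookies.get(name)]


def is_private(path):
    """True if group and others have no permissions on the file (POSIX)."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return mode & 0o077 == 0


# Demo with a temporary file standing in for ~/.bilibili_cookie.
with tempfile.TemporaryDirectory() as tmp:
    cookie_path = os.path.join(tmp, "bilibili_cookie")
    with open(cookie_path, "w", encoding="utf-8") as fh:
        fh.write("SESSDATA=xxx;bili_jct=xxx\n")
    os.chmod(cookie_path, 0o600)
    with open(cookie_path, encoding="utf-8") as fh:
        cookies = parse_cookie_string(fh.read())
    private = is_private(cookie_path)

print(cookies, missing_fields(cookies), private)
```

Checking for missing fields up front gives a clearer error than an empty subtitle response from the API.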

Translation & Language

Respond in the user's conversation language. Do not default to any hardcoded language.

- If the user speaks English, summarize in English

- If the user speaks Chinese, summarize in Chinese

- If the user speaks Japanese, summarize in Japanese

- If the transcript language differs from the user's language, translate while summarizing

This requires no extra configuration — Claude naturally adapts to the conversation language.

When Summarizing

- Transcript/subtitle source: Summarize freely — this is the complete spoken content

- ElevenLabs STT source: High accuracy with punctuation — treat as near-transcript quality

- Whisper STT source: Reliable but may have character-level errors in Chinese — note this to the user

- Description source: Warn the user that this is the creator's written summary, not the full video content

- For long transcripts (>50K chars), break into sections and summarize incrementally
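Breaking a long transcript into sections can be done with a simple splitter that prefers natural boundaries. The 50,000-character limit comes from the guideline above; the function name `split_into_sections` and the boundary heuristic (newline, Chinese full stop, or period followed by a space) are assumptions for illustration.

```python
def split_into_sections(text, max_chars=50_000):
    """Split text into consecutive sections of at most max_chars characters.

    Prefers to cut just after the last newline or sentence end inside the
    window; falls back to a hard cut when no boundary is found.
    """
    sections = []
    start = 0
    while start < len(text):
        end = min(start + max_chars, len(text))
        if end < len(text):
            cut = max(
                text.rfind("\n", start, end),
                text.rfind("。", start, end),
                text.rfind(". ", start, end),
            )
            if cut > start:
                end = cut + 1  # keep the boundary character with this section
        sections.append(text[start:end])
        start = end
    return sections


transcript = "This is one sentence of the transcript. " * 3000  # 120,000 chars
sections = split_into_sections(transcript)
print(len(sections), [len(s) for s in sections])
```

Because the sections are consecutive slices, joining them reproduces the original transcript exactly, so nothing is lost between incremental summaries.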
