Agent Work Estimation: Accurate Task Scoping for AI - Openclaw Skills

Author: Internet

2026-04-16

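What is Agent Work Estimation?

The Agent Work Estimation skill is a technical protocol designed to counter the "human-time anchoring bias" common in large language models. When agents estimate a task, they tend to reproduce timelines from human developer forums, which leads to large overestimates for work that takes only minutes. With this framework from the Openclaw skills library, agents focus on tool-call rounds (specific cycles of reasoning, coding, and verification) and so produce realistic estimates of technical effort.

The skill enforces a bottom-up calculation that turns abstract complexity into concrete operational units. With this approach, teams can better align an AI agent's workflow with actual project requirements, so that every estimate is backed by technical reasoning rather than generic training-data intuition. For developers who want to integrate AI agents into a professional project-management process through Openclaw skills, it is an essential component.

Download: https://github.com/openclaw/skills/tree/main/skills/hjw21century/agent-estimation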

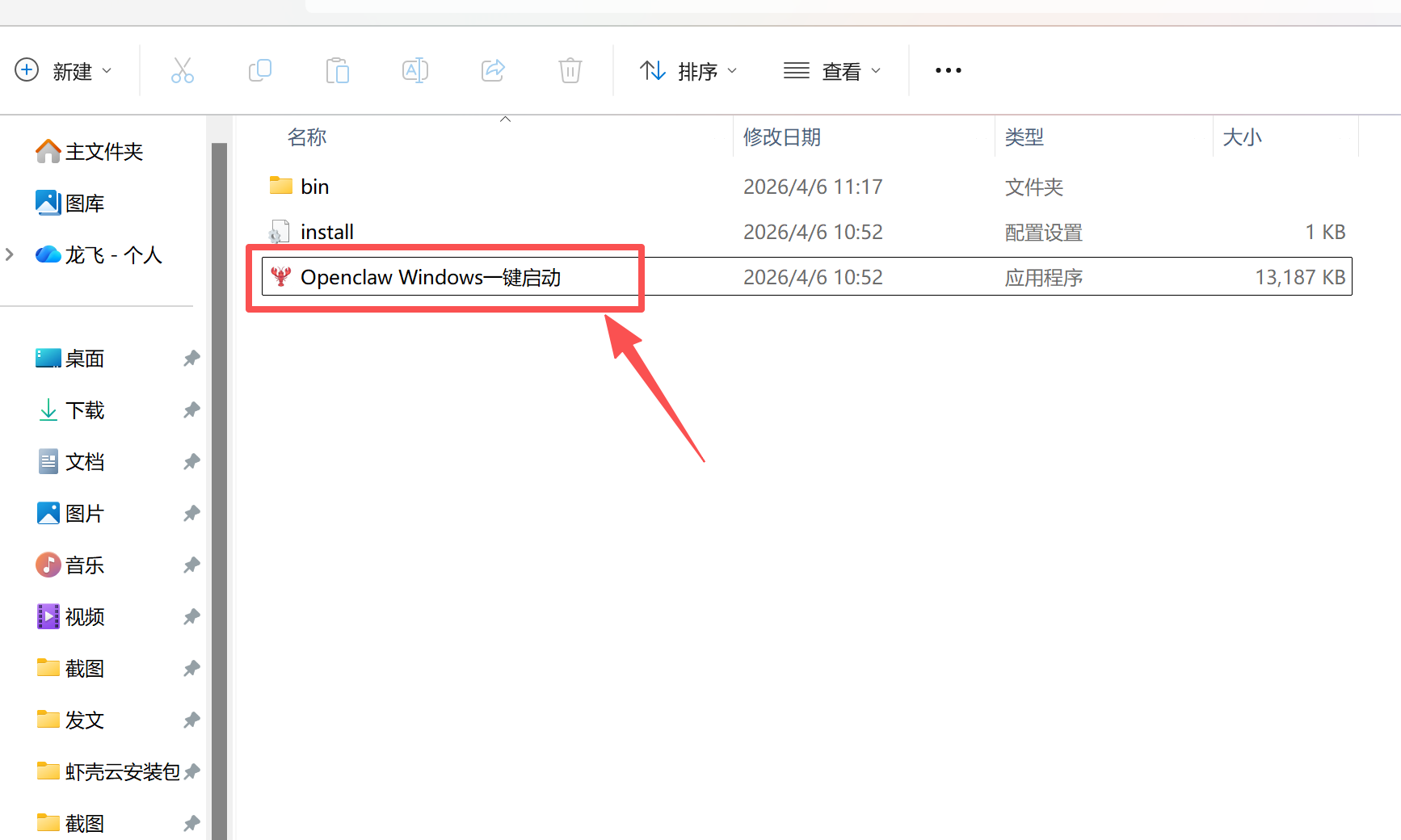

Installation and Download

1. ClawHub CLI

The fastest way to install the skill directly from source.

npx clawhub@latest install agent-estimation

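2. Manual installation

Copy the skill folder to one of the following locations:

Global: ~/.openclaw/skills/

Workspace: /skills/

Priority: workspace > local > built-in

3. Prompt-based installation

Copy this prompt into OpenClaw to install the skill automatically.

Please install agent-estimation using Clawhub. If Clawhub is not installed yet, install it first (npm i -g clawhub).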

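Agent Work Estimation Use Cases

- Planning new feature development where the agent must provide a delivery timeline.

- Scoping complex refactoring tasks to identify potential logic bottlenecks.

- Assessing technical debt by breaking down the number of execution rounds a cleanup requires.

- Communicating realistic expectations to human stakeholders during collaborative coding.

- Decomposition: the agent breaks the main task into independent functional modules that can be built and tested separately.

- Base round estimation: each module is assigned a number of tool-call rounds according to its complexity pattern, from boilerplate tasks (1-2 rounds) to high-uncertainty work (8-15 rounds).

- Risk assignment: a risk coefficient (1.0 to 2.0) is applied to each module to account for missing documentation, platform quirks, or integration unknowns.

- Total calculation: the agent sums the effective rounds and adds a 10-20% integration buffer for the work of connecting modules.

- Wallclock conversion: the final round count is multiplied by a minutes-per-round factor (typically 3 minutes) to give a human-readable duration.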

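Agent Work Estimation Configuration Guide

To implement this logic in your agent environment, include the estimation procedure in the system instructions or reference it as a utility skill. No external dependencies are required beyond the following prompt logic:

# Activate the agent estimation framework

# Define the atomic "round" unit for the agent

# Set the default wallclock conversion to 3 minutes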

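Agent Work Estimation Data Schema and Taxonomy

The skill organizes estimation data with a structured taxonomy to keep different Openclaw skills implementations consistent:

| Attribute | Type | Description |
|---|---|---|
| Round | Unit | Atomic cycle: think -> write -> execute -> verify -> fix. |
| Module | Component | A logical unit made up of 2-15 rounds. |
| Risk coefficient | Float | Multiplier (1.0 - 2.0) based on ecosystem maturity and documentation. |
| Integration factor | Percentage | Overhead of 10-20% of the base total, added for connecting modules. |
| Wallclock time | Duration | Final conversion from rounds to human minutes. |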
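One way to picture this taxonomy is as a small data model. The sketch below is illustrative only: the class name `Module` and its field names are hypothetical and not part of the skill, and the lower bound of one round is an assumption taken from the 1-2 round boilerplate anchor given later in the skill.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    """One independently buildable unit of a task (hypothetical type name)."""

    name: str
    base_rounds: int   # tool-call rounds before risk adjustment
    risk: float = 1.0  # risk coefficient, 1.0 (low) to 2.0 (very high)

    def __post_init__(self) -> None:
        # Assumption: at least one round, since boilerplate modules take 1-2.
        if self.base_rounds < 1:
            raise ValueError("a module needs at least one round")
        if not 1.0 <= self.risk <= 2.0:
            raise ValueError("risk coefficient must be between 1.0 and 2.0")

    @property
    def effective_rounds(self) -> float:
        # Effective rounds = base rounds x risk coefficient.
        return self.base_rounds * self.risk
```

Making the dataclass frozen keeps an estimate from being edited after the fact; a revised estimate becomes a new `Module` with its own stated risk.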

name: agent-estimation

description: Accurately estimate AI agent work effort using the agent's own operational units (tool-call rounds) instead of human time. Use when asked to estimate, scope, plan, or evaluate how long a coding task will take. Prevents the common failure mode where agents anchor to human developer timelines and massively overestimate. Outputs a structured breakdown with round counts, risk factors, and a final wallclock conversion.

Agent Work Estimation Skill

Problem

AI coding agents systematically overestimate task duration because they anchor to human developer timelines absorbed from training data. A task an agent can complete in 30 minutes gets estimated as "2-3 days" because that's what a human developer forum post would say.

Solution

Force the agent to estimate from its own operational units — tool-call rounds — and only convert to human wallclock time at the very end.

Core Units

| Unit | Definition | Scale |
|---|---|---|
| Round | One tool-call cycle: think → write code → execute → verify → fix | ~2-4 min wallclock |
| Module | A functional unit built from multiple rounds until usable | 2-15 rounds |
| Project | All modules + integration + debugging | Sum of modules × integration factor |

A Round is the atomic unit. It maps directly to one iteration of:

- Agent reasons about what to do

- Agent writes/edits code

- Agent runs the code or a test

- Agent reads the output

- Agent decides if it needs to fix something (if yes → next round)

Estimation Procedure

When asked to estimate a task, follow these steps in order:

Step 1: Decompose into Modules

Break the task into functional modules. Each module should be independently buildable and testable. Ask yourself: "What are the distinct pieces I would build one at a time?"

Step 2: Estimate Rounds per Module

For each module, estimate the number of rounds using these anchors:

| Pattern | Typical Rounds | Examples |
|---|---|---|
| Boilerplate / known pattern | 1-2 | CRUD endpoint, config file, standard API client |
| Moderate complexity | 3-5 | Custom UI layout, state management, data pipeline |
| Exploratory / under-documented | 5-10 | Unfamiliar framework, platform-specific APIs, complex integrations |
| High uncertainty | 8-15 | Undocumented behavior, novel algorithms, multi-system debugging |

Key calibration rules:

- If you can generate the code in one shot and it will likely run → 1 round

- If you'll need to generate, run, see an error, and fix → 2-3 rounds

- If the library/framework has sparse docs and you'll be guessing → 5+ rounds

- If it involves platform permissions, OS-level APIs, or environment-specific behavior the user must manually verify → add 2-3 rounds
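The anchors and calibration rules above can be read as a simple lookup. The following is a minimal sketch, assuming the pattern keys (`"boilerplate"`, `"moderate"`, and so on) and the function name `base_round_range` as hypothetical labels; only the numeric ranges come from the table and rules.

```python
# Typical round ranges per pattern, copied from the anchor table.
ROUND_ANCHORS = {
    "boilerplate": (1, 2),
    "moderate": (3, 5),
    "exploratory": (5, 10),
    "high_uncertainty": (8, 15),
}

# Extra rounds when the user must manually verify platform behavior.
MANUAL_VERIFY_EXTRA = (2, 3)


def base_round_range(pattern: str, manual_verification: bool = False) -> tuple[int, int]:
    """Return the (low, high) base round range for a module pattern."""
    low, high = ROUND_ANCHORS[pattern]
    if manual_verification:
        low += MANUAL_VERIFY_EXTRA[0]
        high += MANUAL_VERIFY_EXTRA[1]
    return low, high
```

For example, `base_round_range("exploratory", manual_verification=True)` widens the 5-10 anchor by the 2-3 extra rounds from the last calibration rule.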

Step 3: Assign Risk Coefficients

Each module gets a risk coefficient that inflates its round count:

| Risk Level | Coefficient | When to Apply |
|---|---|---|
| Low | 1.0 | Mature ecosystem, clear docs, agent has strong pattern match |
| Medium | 1.3 | Minor unknowns, may need 1-2 extra debug rounds |
| High | 1.5 | Sparse docs, platform quirks, integration unknowns |
| Very High | 2.0 | Possible dead ends, may need to change approach entirely |

Step 4: Calculate Totals

Module effective rounds = base rounds × risk coefficient

Project rounds = Σ(module effective rounds) + integration rounds

Integration rounds = 10-20% of base total (for wiring modules together)
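The three formulas above can be sketched in a few lines. The function name `project_rounds`, the tuple layout, and the default integration factor of 0.15 (the midpoint of the 10-20% range) are assumptions made for illustration, not part of the skill.

```python
def project_rounds(modules, integration_factor=0.15):
    """Compute project totals from (base_rounds, risk_coefficient) pairs.

    integration_factor is applied to the base total, not the risk-adjusted
    total, as the Step 4 formulas state.
    """
    base_total = sum(base for base, _ in modules)
    effective_total = sum(base * risk for base, risk in modules)
    integration = base_total * integration_factor
    return {
        "base": base_total,
        "integration": round(integration, 1),
        "total": round(effective_total + integration, 1),
    }


# Three modules: low risk, high risk, very high risk.
estimate = project_rounds([(2, 1.0), (4, 1.5), (4, 2.0)], integration_factor=0.2)
print(estimate)  # {'base': 10, 'integration': 2.0, 'total': 18.0}
```

Here the effective rounds are 2 + 6 + 8 = 16, and 20% of the 10 base rounds adds 2 integration rounds, for 18 project rounds.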

Step 5: Convert to Wallclock Time

Only at the very end, convert to human time:

Wallclock time = project rounds × minutes_per_round

Default minutes_per_round = 3 minutes (includes agent generation time + user review time).

Adjust this parameter based on context:

- Fast iteration, user barely reviews → 2 min/round

- Complex domain, user carefully reviews each step → 4 min/round

- User needs to manually test (mobile, hardware, permissions) → 5 min/round
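As a rough sketch of this final conversion, the per-round values below are the ones listed above; the context keys and the helper names `wallclock_minutes` and `format_duration` are hypothetical.

```python
# Minutes per round for each review context, from the list above.
MINUTES_PER_ROUND = {
    "fast": 2,            # fast iteration, user barely reviews
    "default": 3,         # agent generation time + user review time
    "careful_review": 4,  # complex domain, every step reviewed
    "manual_testing": 5,  # mobile, hardware, or permission testing
}


def wallclock_minutes(rounds: float, context: str = "default") -> float:
    """Convert project rounds to minutes; do this only as the last step."""
    return rounds * MINUTES_PER_ROUND[context]


def format_duration(minutes: float) -> str:
    """Render minutes as a short human-readable duration."""
    hours, mins = divmod(round(minutes), 60)
    return f"{hours} h {mins} min" if hours else f"{mins} min"


print(format_duration(wallclock_minutes(18)))                    # 54 min
print(format_duration(wallclock_minutes(18, "manual_testing")))  # 1 h 30 min
```

Keeping the context as a parameter of the conversion, instead of baking it into the round count, matches the skill's rule that user-side delays change minutes per round and not the number of rounds.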

Output Format

Always output the estimation in this exact structure:

### Task: [task name]

#### Module Breakdown

| # | Module | Base Rounds | Risk | Effective Rounds | Notes |
|---|--------|------------|------|-----------------|-------|
| 1 | ... | N | 1.x | M | why |
| 2 | ... | N | 1.x | M | why |

#### Summary

- **Base rounds**: X

- **Integration**: +Y rounds

- **Risk-adjusted total**: Z rounds

- **Estimated wallclock**: A – B minutes (at N min/round)

#### Biggest Risks

1. [specific risk and what could blow up the estimate]

2. [...]
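To show how the template might be filled in mechanically, here is a minimal sketch. The function name `render_estimate` and the tuple layout are hypothetical, and the A – B wallclock range is derived here from the 10% and 20% integration bounds, which is one possible reading since the skill does not say how the range is obtained. The Biggest Risks section is left out because it needs free-text judgment.

```python
def render_estimate(task, modules, minutes_per_round=3):
    """Render the breakdown and summary; modules are (name, base, risk, note)."""
    lines = [
        f"### Task: {task}",
        "",
        "#### Module Breakdown",
        "| # | Module | Base Rounds | Risk | Effective Rounds | Notes |",
        "|---|--------|------------|------|-----------------|-------|",
    ]
    base_total = 0
    effective_total = 0.0
    for i, (name, base, risk, note) in enumerate(modules, start=1):
        effective = base * risk
        base_total += base
        effective_total += effective
        lines.append(f"| {i} | {name} | {base} | {risk:.1f} | {effective:.1f} | {note} |")

    # Assumption: the low/high bounds use 10% and 20% integration overhead.
    low_integration = 0.10 * base_total
    high_integration = 0.20 * base_total
    low = effective_total + low_integration
    high = effective_total + high_integration
    lines += [
        "",
        "#### Summary",
        f"- **Base rounds**: {base_total}",
        f"- **Integration**: +{low_integration:.1f} to +{high_integration:.1f} rounds",
        f"- **Risk-adjusted total**: {low:.1f} to {high:.1f} rounds",
        f"- **Estimated wallclock**: {low * minutes_per_round:.0f} – "
        f"{high * minutes_per_round:.0f} minutes (at {minutes_per_round} min/round)",
    ]
    return "\n".join(lines)


print(render_estimate("Demo", [
    ("API client", 2, 1.0, "known pattern"),
    ("Sync engine", 8, 1.5, "sparse docs"),
]))
```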

Anti-Patterns to Avoid

These are the failure modes this skill exists to prevent:

- Human-time anchoring: "A developer would take about 2 weeks..." → NO. Start from rounds.

- Padding by vibes: Adding time "just to be safe" without specific risk rationale → NO. Use risk coefficients.

- Confusing complexity with volume: 500 lines of boilerplate ≠ hard. One line of CGEvent API ≠ easy. Estimate by uncertainty, not line count.

- Forgetting integration cost: Modules work alone but break together. Always add integration rounds.

- Ignoring user-side bottlenecks: If the user must manually grant permissions, restart an app, or test on a device, that's extra round time. Adjust minutes_per_round, don't add phantom rounds.

Calibration Reference

Here are example projects with known round counts to help calibrate:

See references/calibration-examples.md for detailed examples across project types.

Eval Prompts

See evals/evals.json for test cases to validate estimation accuracy.
